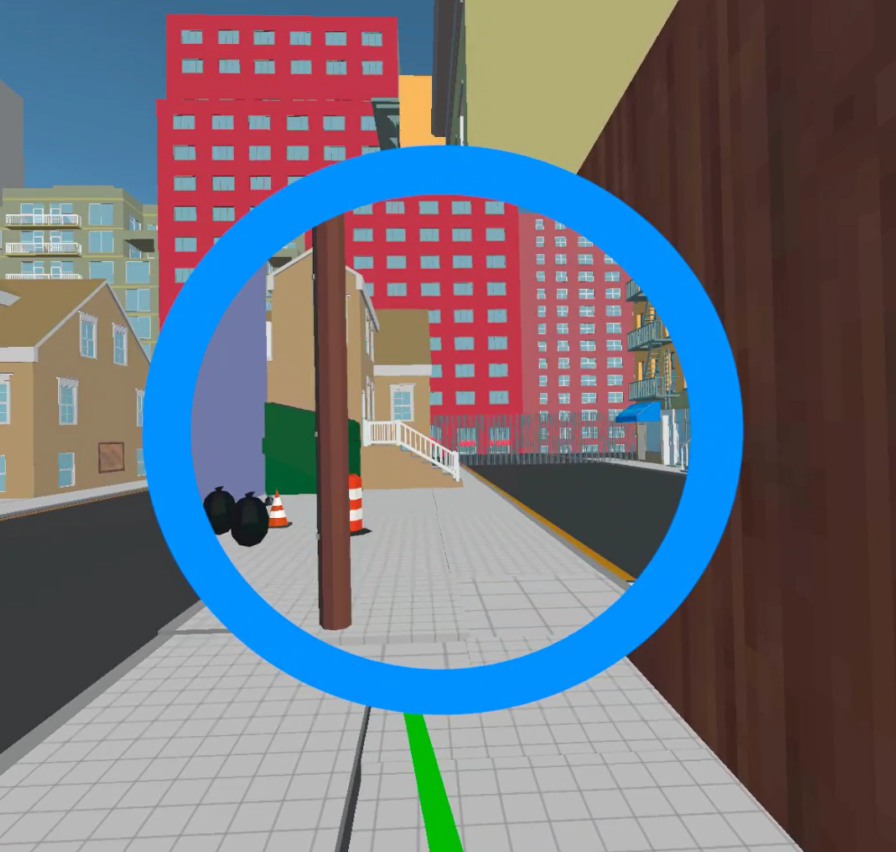

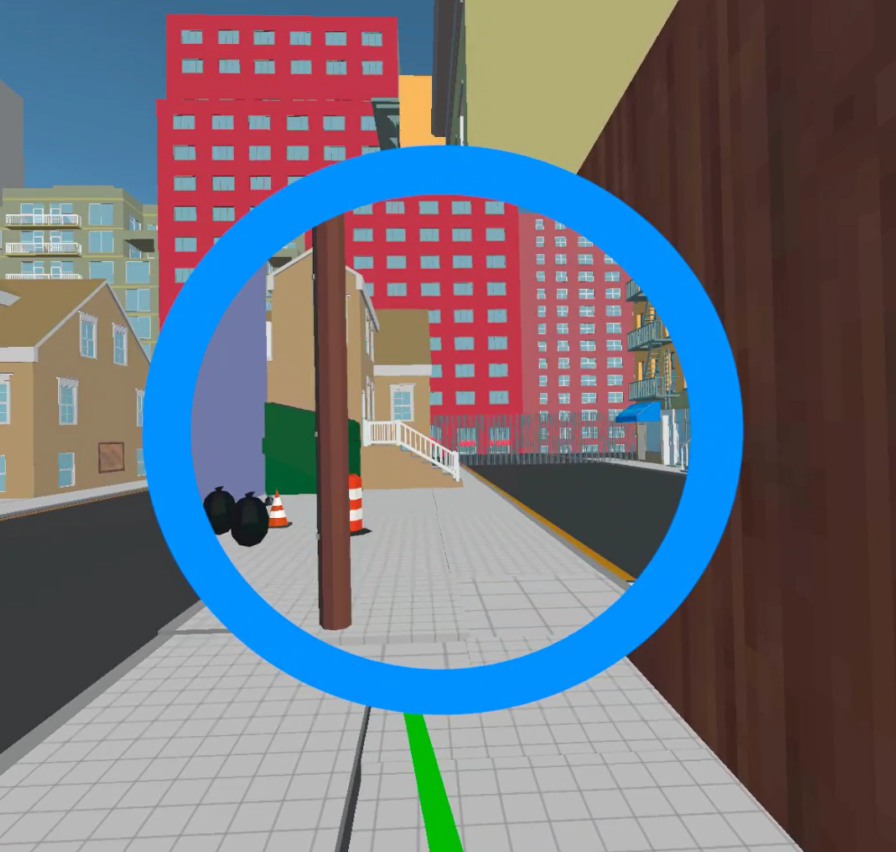

Continuous Travel In Virtual Reality Using a 3D Portal

Andrew Atkins, Serge Belongie, Harald Haraldsson

UIST '21: The Adjunct Publication of the 34th Annual ACM Symposium on User Interface Software and Technology

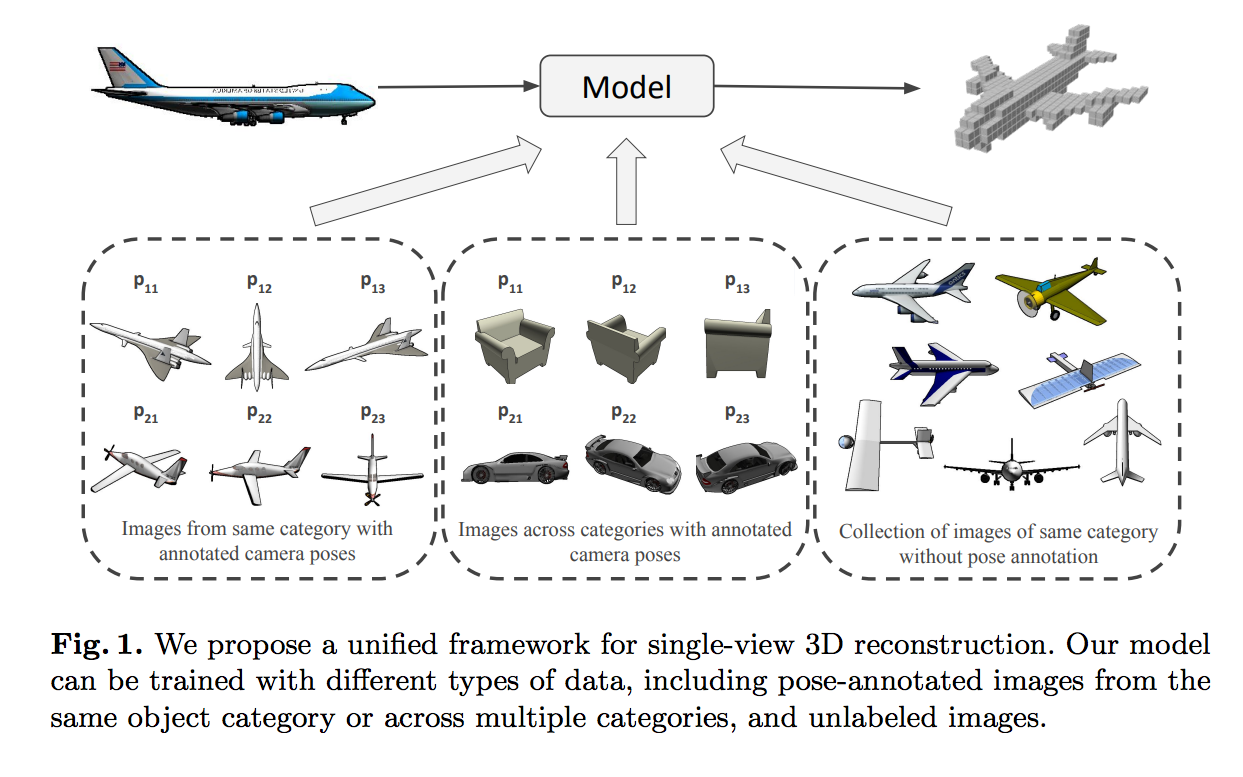

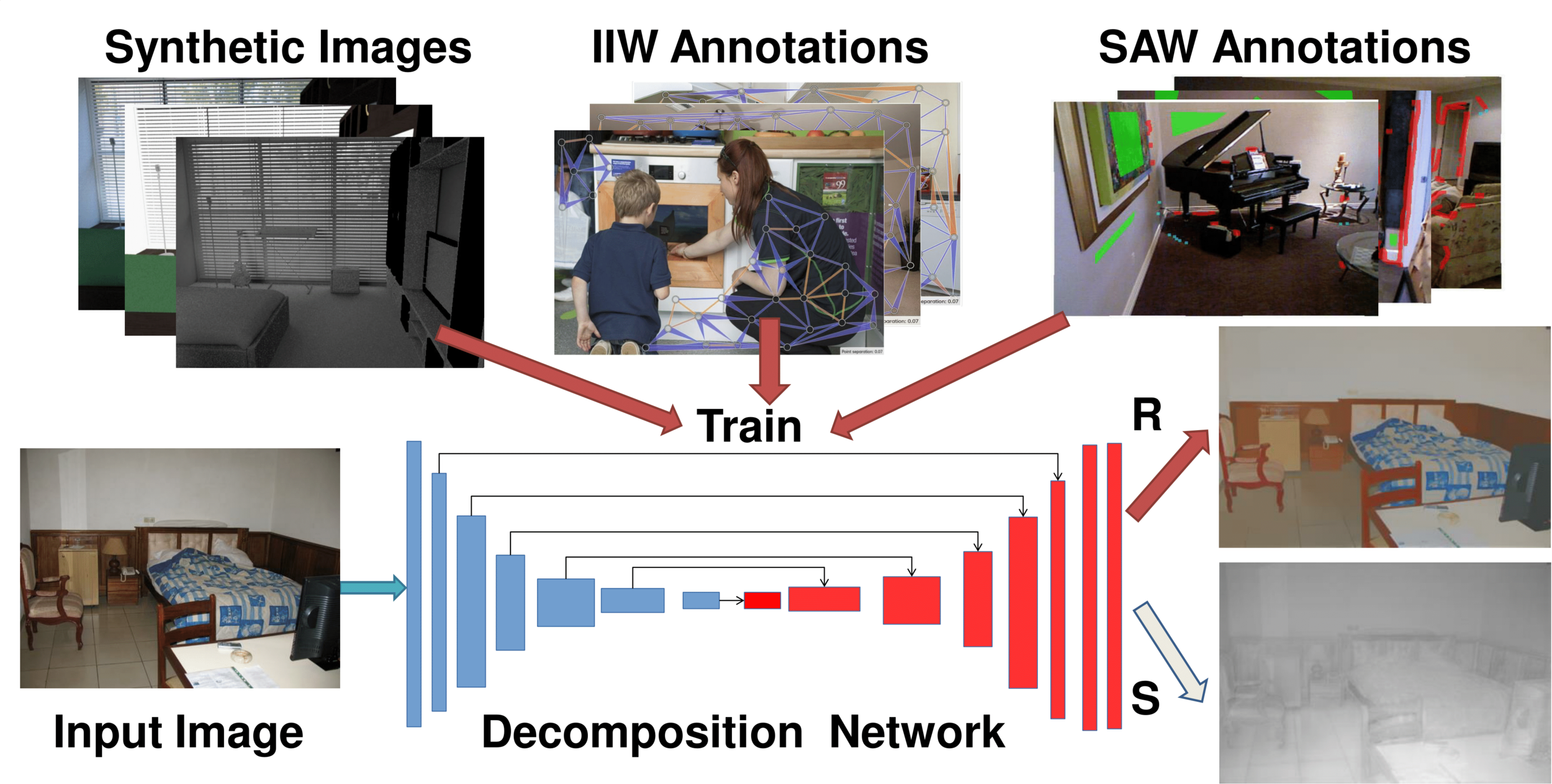

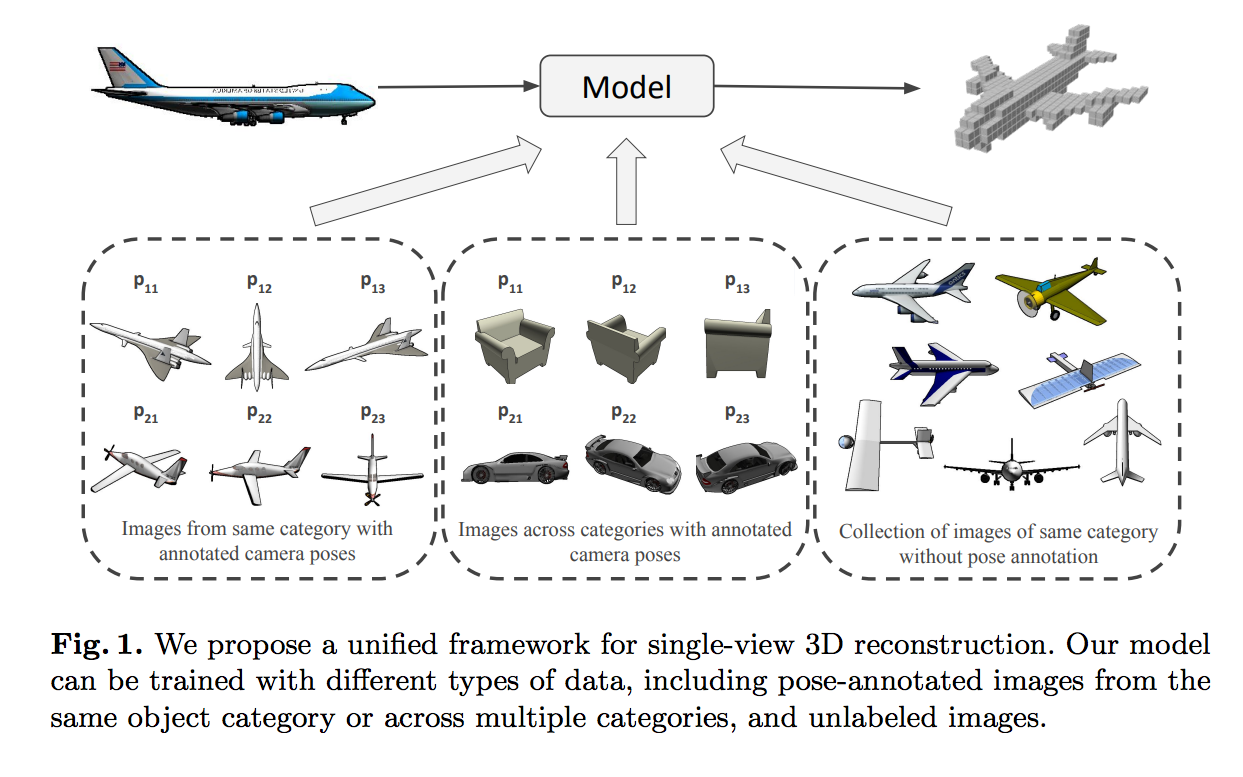

Learning Single-View 3D Reconstruction with Limited Pose Supervision

Guandao Yang, Yin Cui, Serge Belongie, Bharath Hariharan

European Conference on Computer Vision (ECCV), Munich, Germany, 2018.

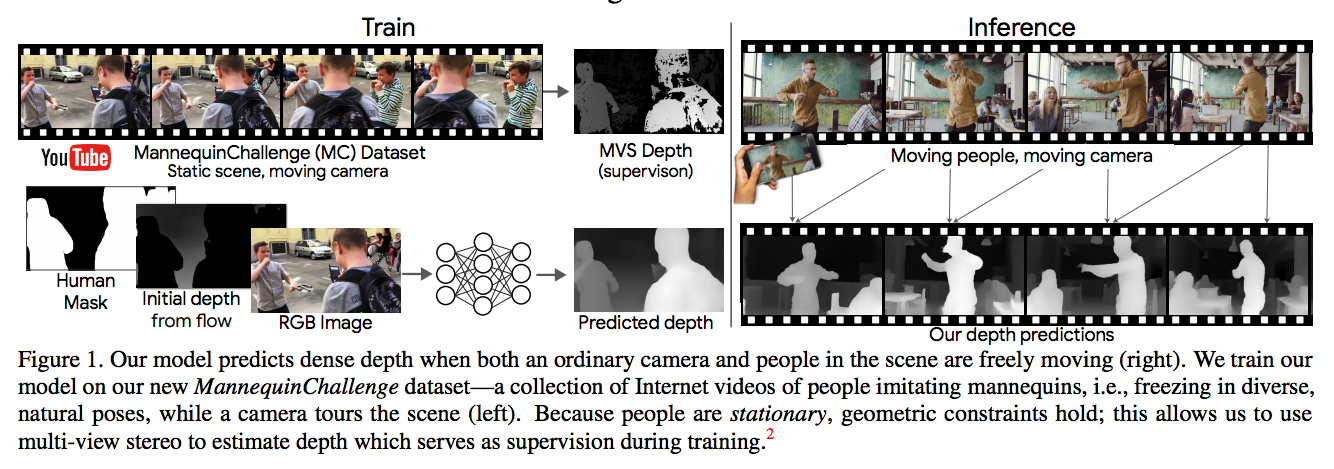

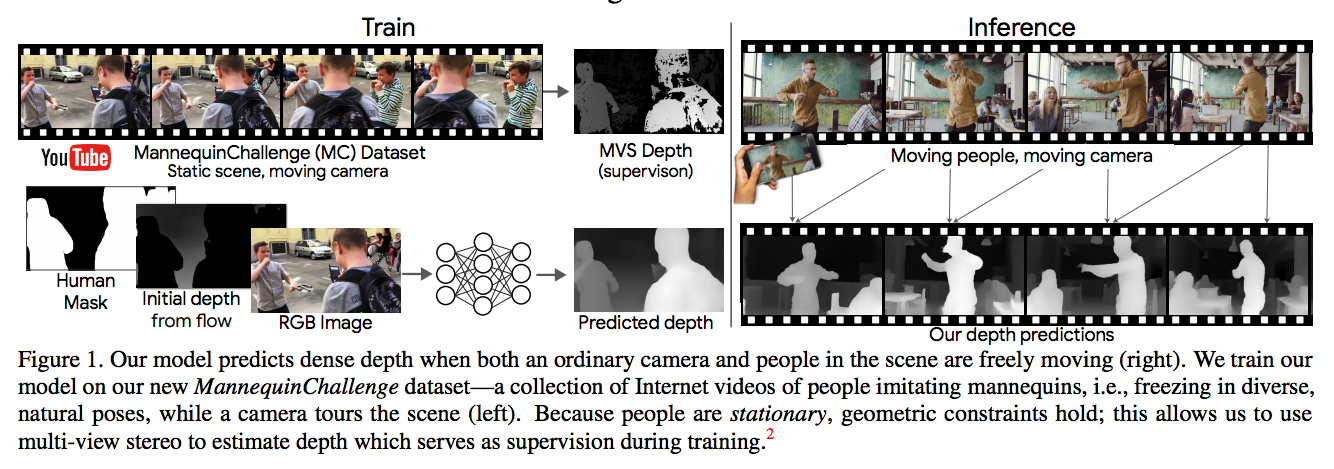

Learning the Depths of Moving People by Watching Frozen People

Zhengqi Li, Tali Dekel, Forrester Cole, Richard Tucker, Noah Snavely, Ce Liu, William T. Freeman

IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, 2019 (Oral)

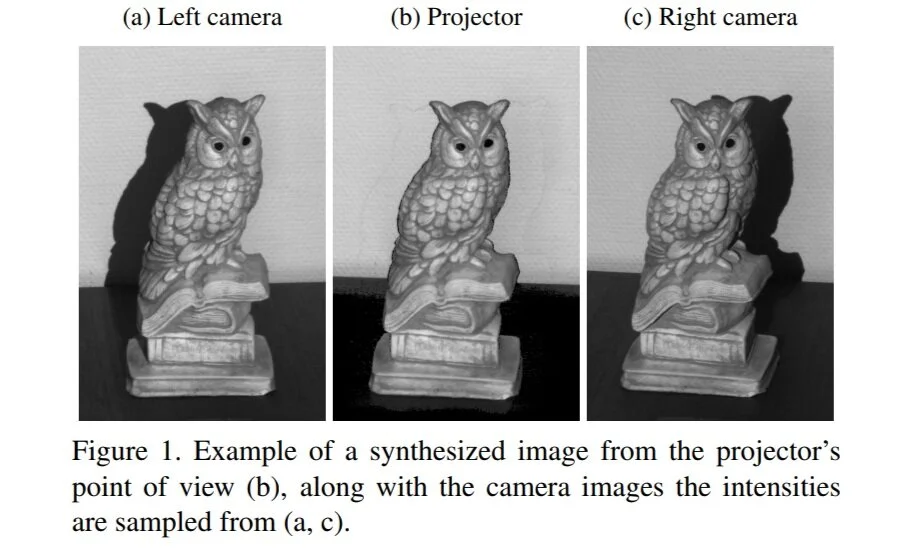

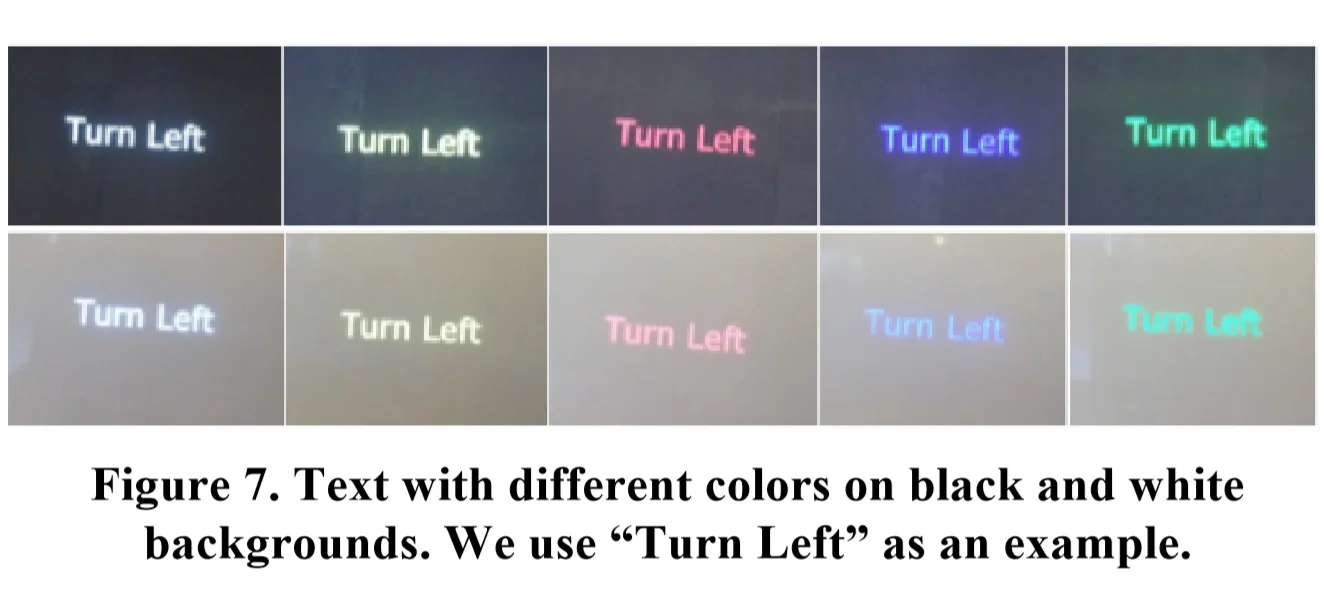

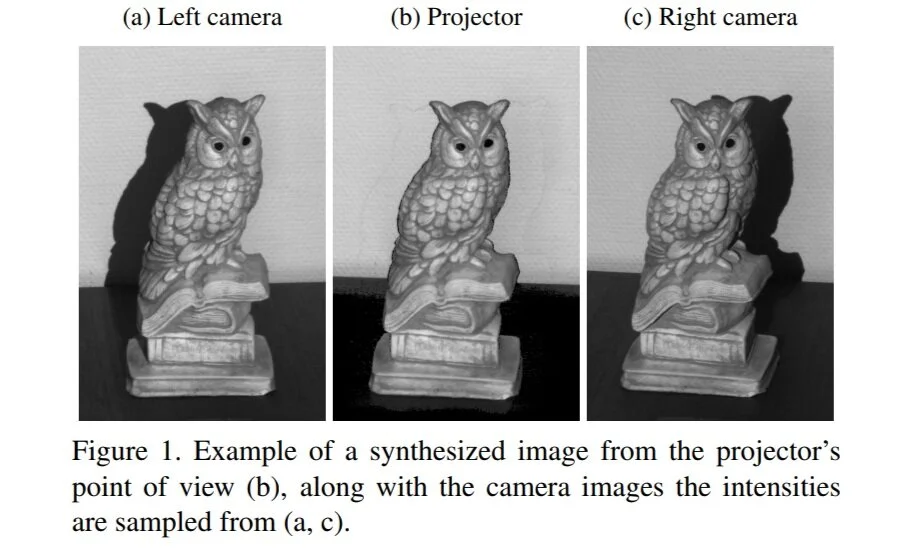

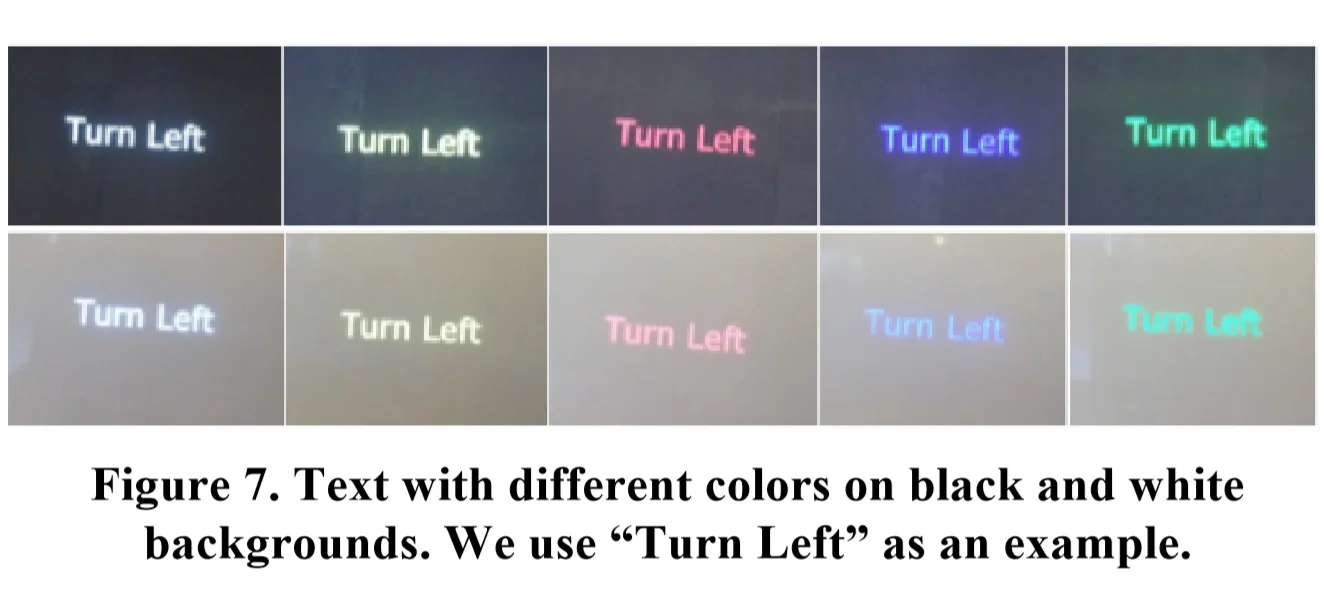

Generating Spatial Attention Cues via Illusory Motion

Janus Nortoft Jensen, Morten Hannemose, Jakob Wilm, Anders Bjorholm Dahl, Jeppe Refall Frisvad, Serge Belongie

CVPR Workshop on Computer Vision for Augmented and Virtual Reality, Long Beach, CA, 2019

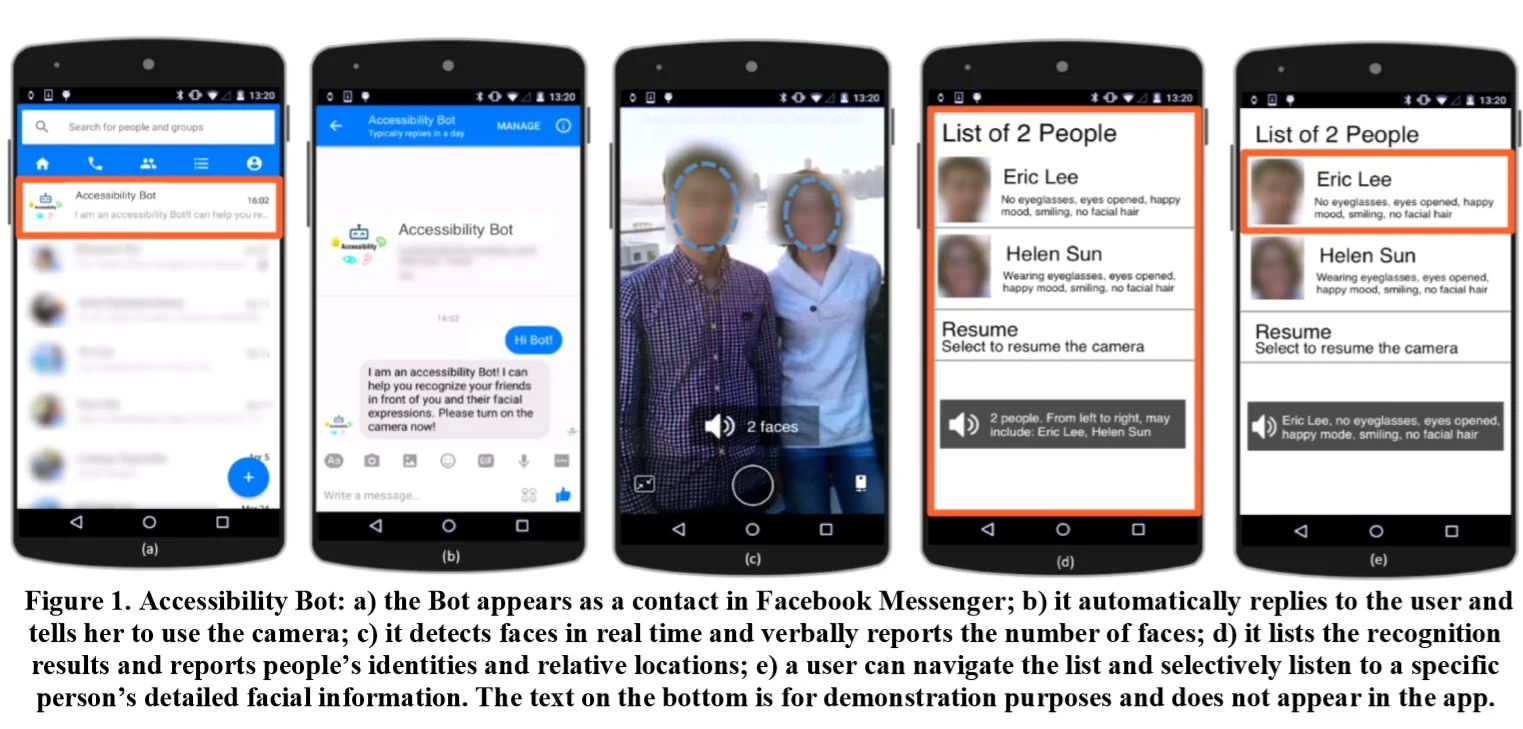

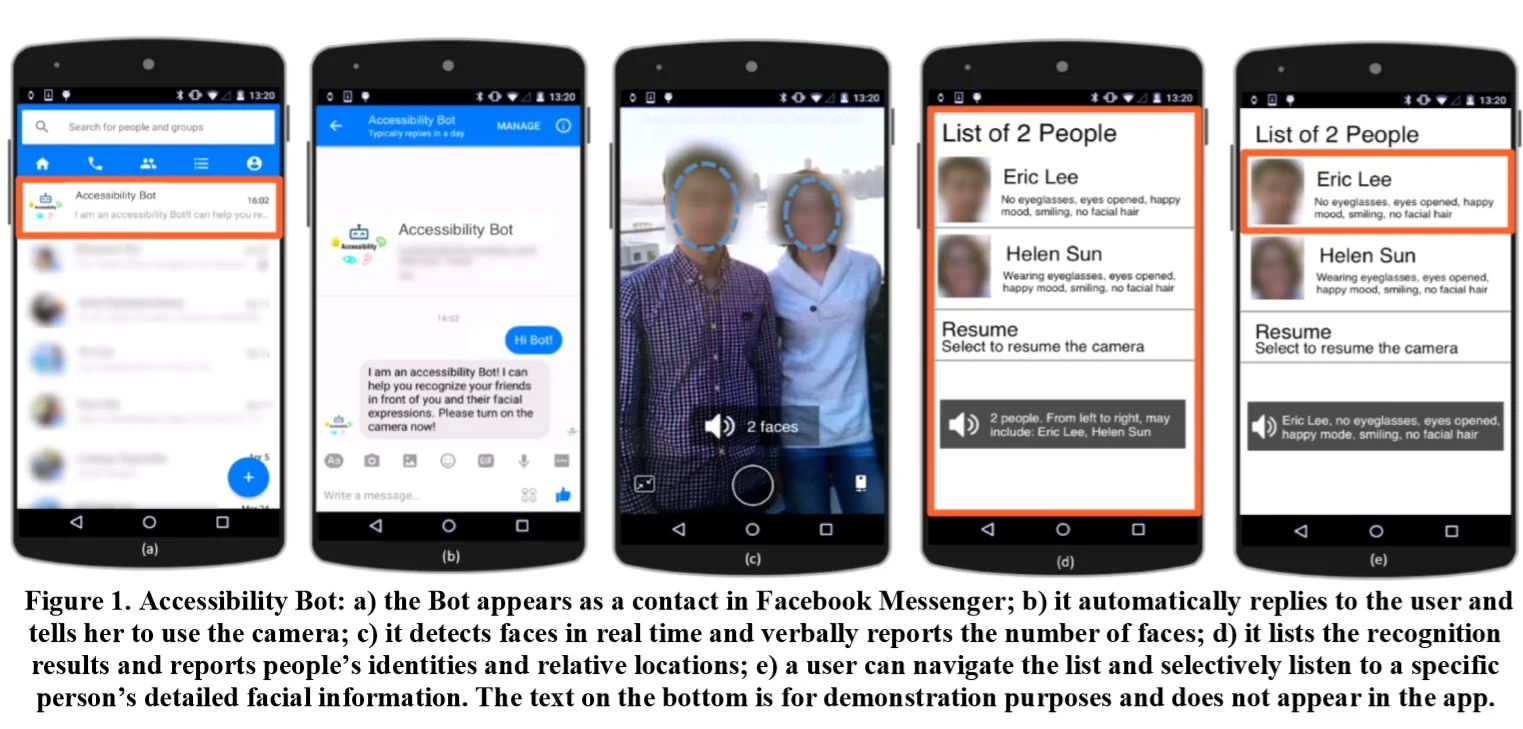

A Face Recognition Application for People with Visual Impairments: Understanding Use Beyond the Lab

Yuhang Zhao, Shaomei Wu, Lindsay Reynolds, Shiri Azenkot

ACM Conference on Computer-Human Interaction (CHI), Montreal, 2018

Understanding Low Vision People's Visual Perception on Commercial Augmented Reality Glasses

Yuhang Zhao, Michele Hu, Shafeka Hashash, Shiri Azenkot

ACM Conference on Computer-Human Interaction (CHI), Denver, CO, May 6-11, 2017

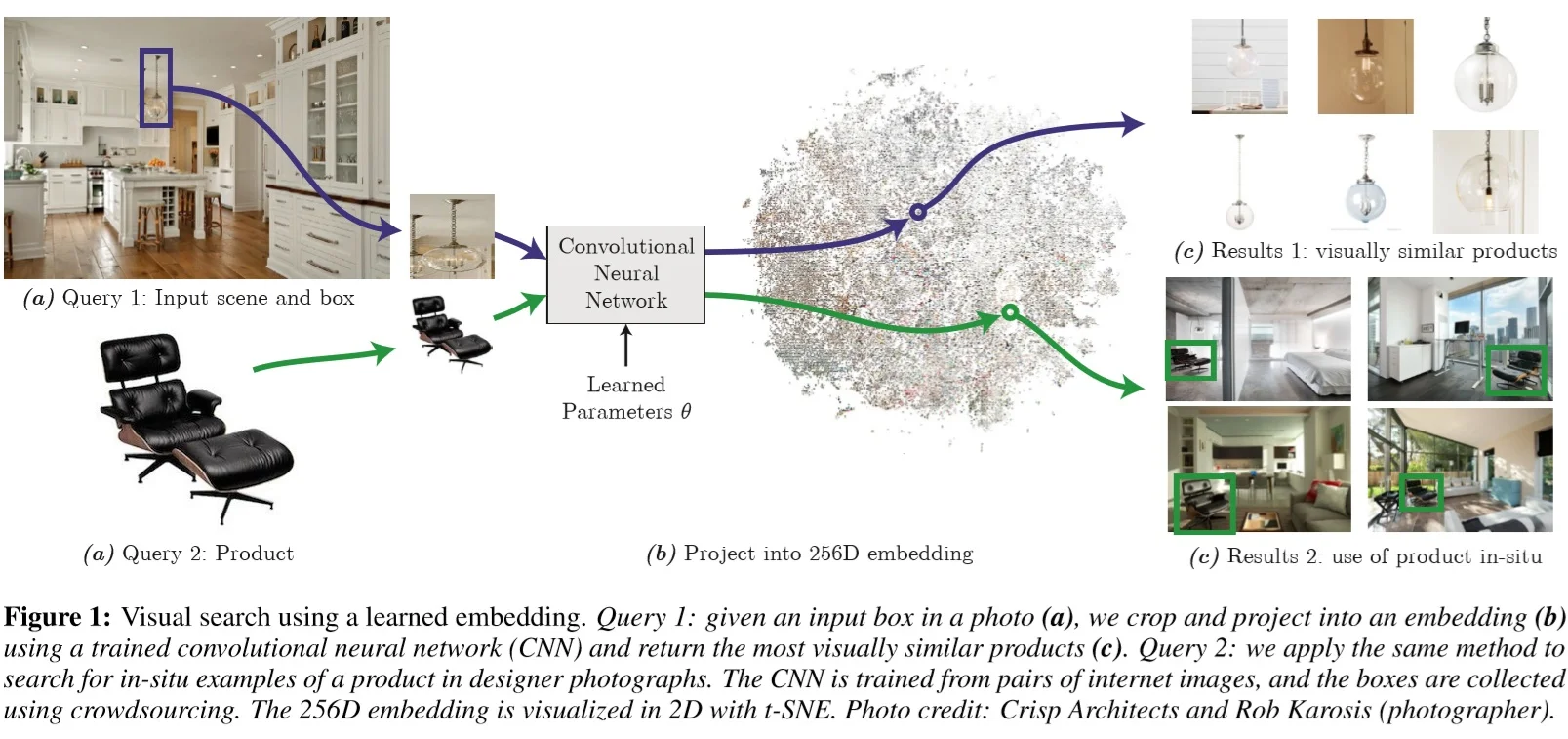

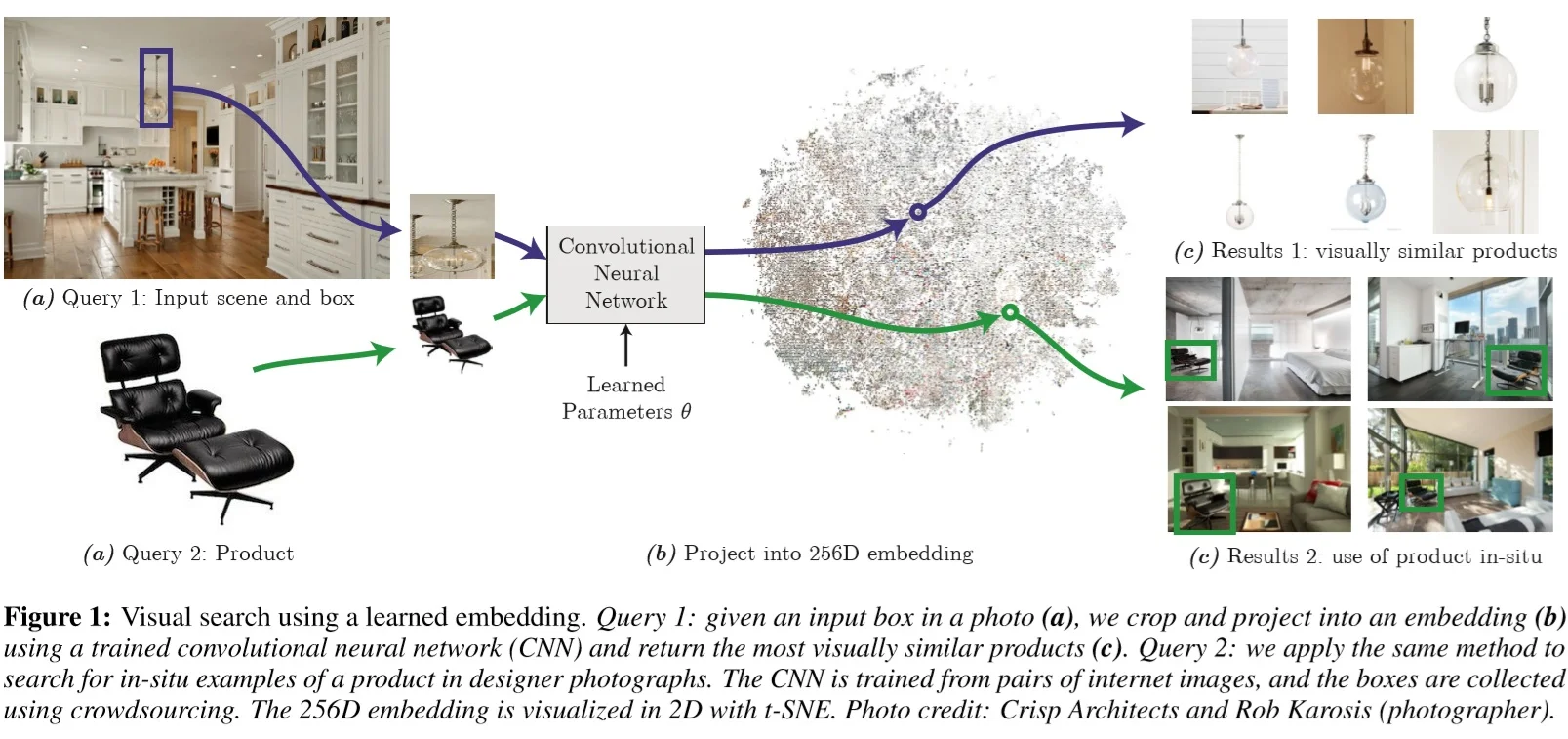

Learning Visual Similarity for Product Design with Convolutional Neural Networks

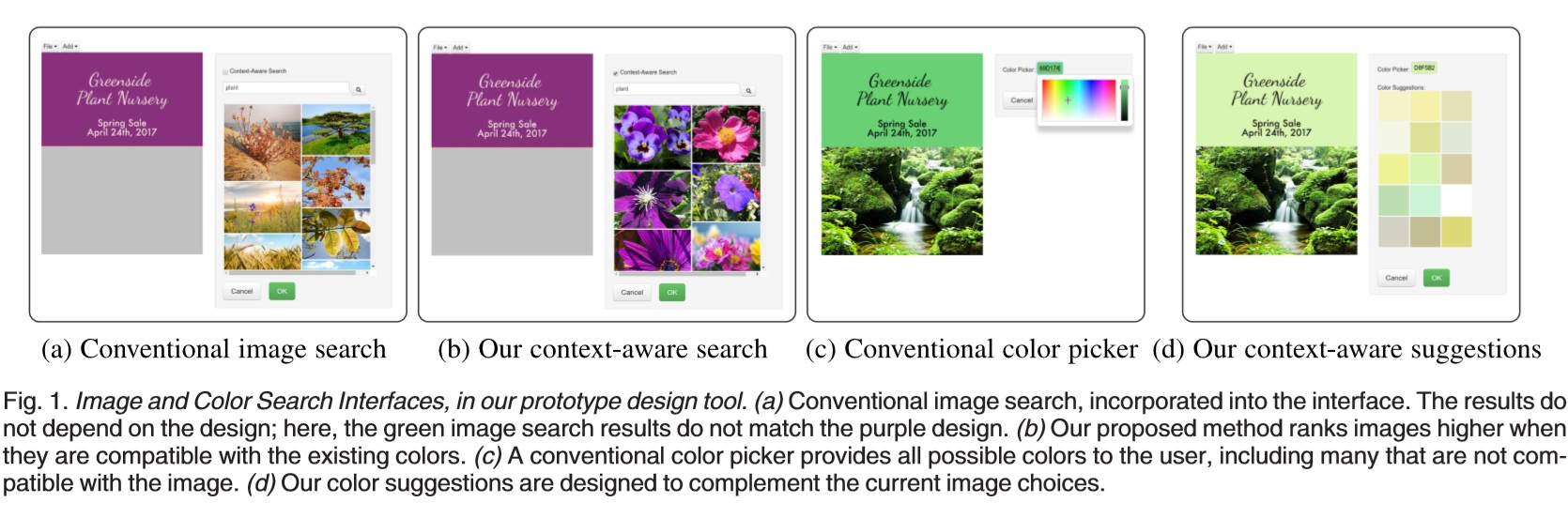

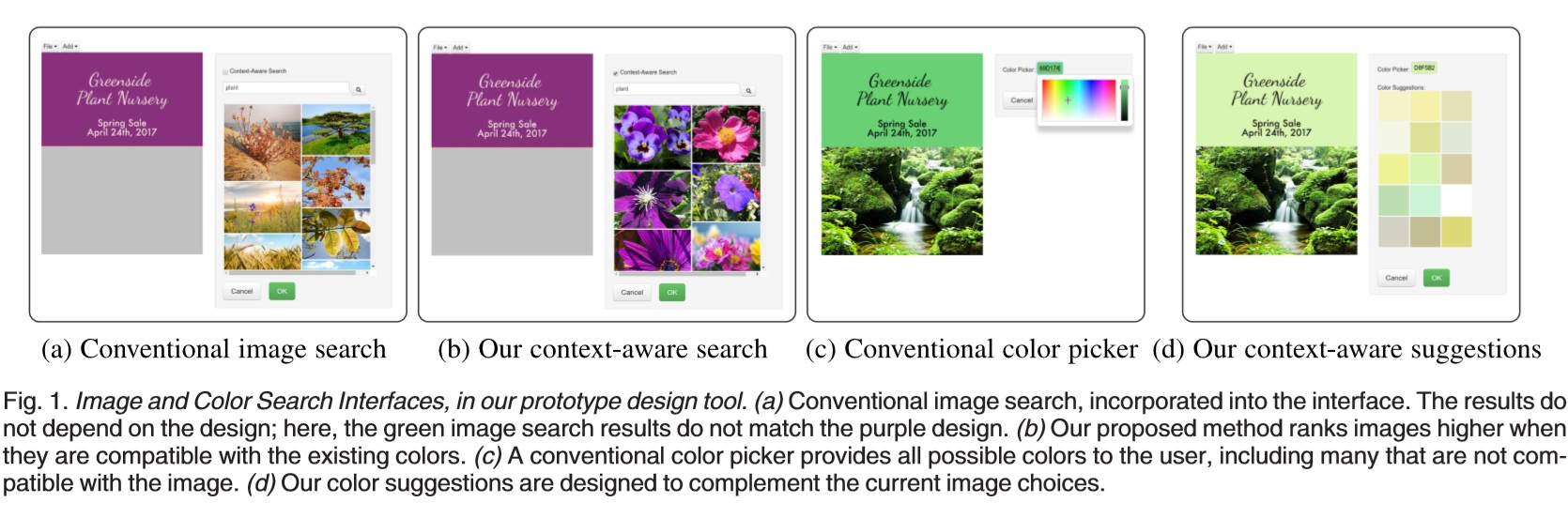

Context-Aware Asset Search for Graphic Design

Balazs Kovacs, Peter O’Donovan, Kavita Bala, Aaron Hertzmann

IEEE Transactions on Visualization and Computer Graphics, 25(7), 2018.

Deep Painterly Harmonization

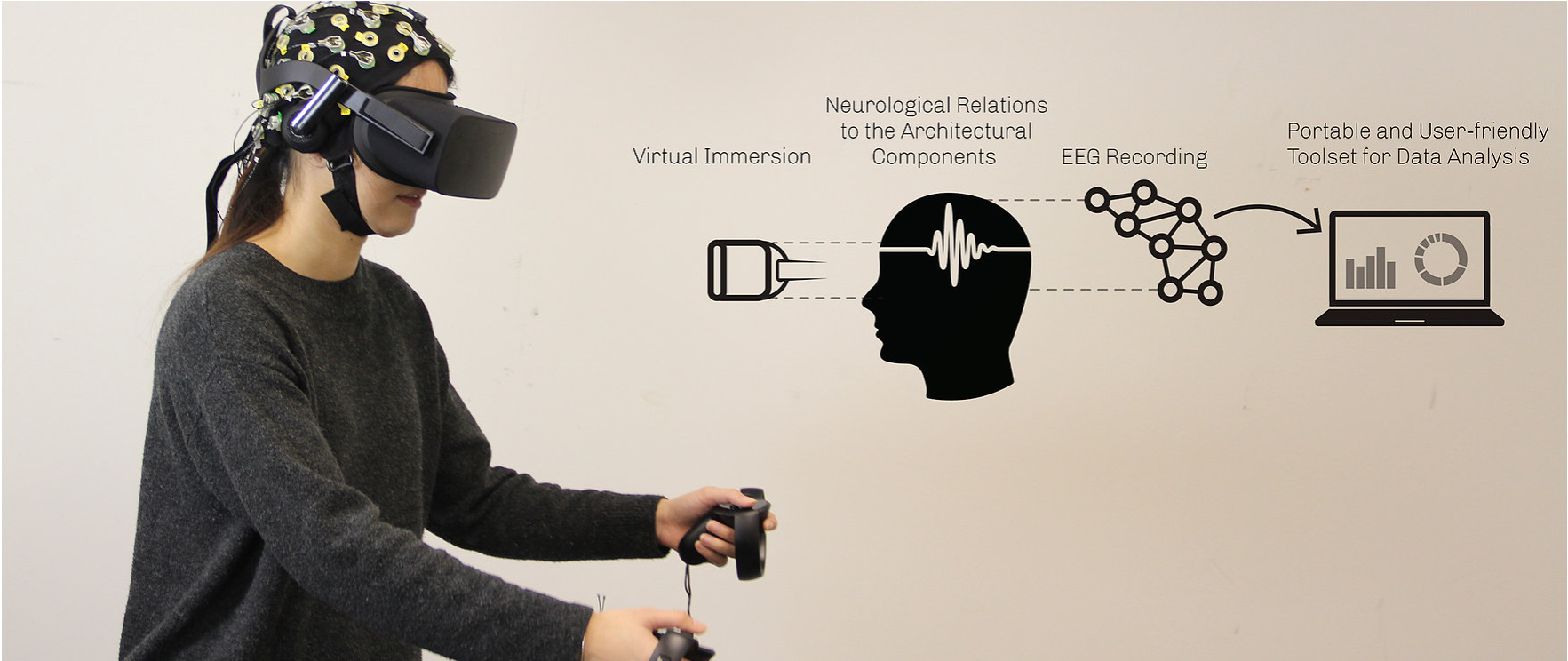

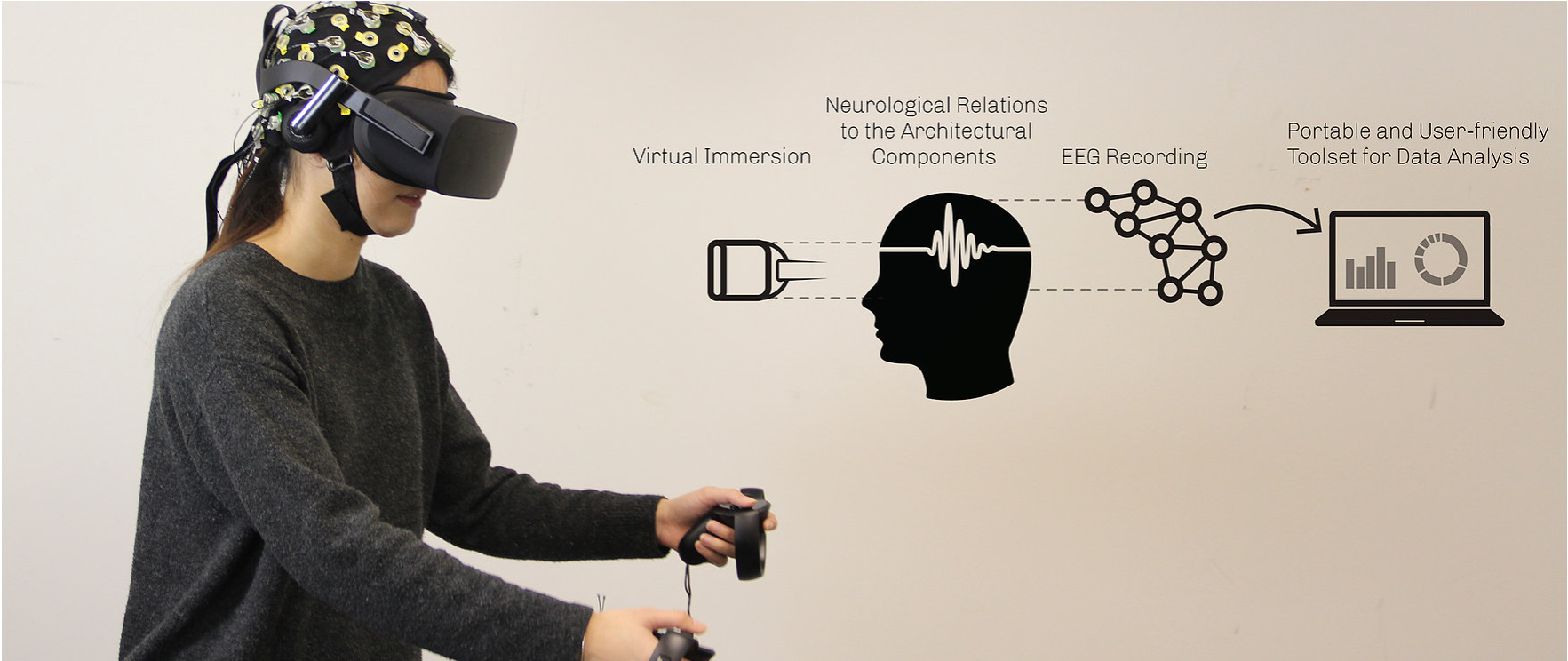

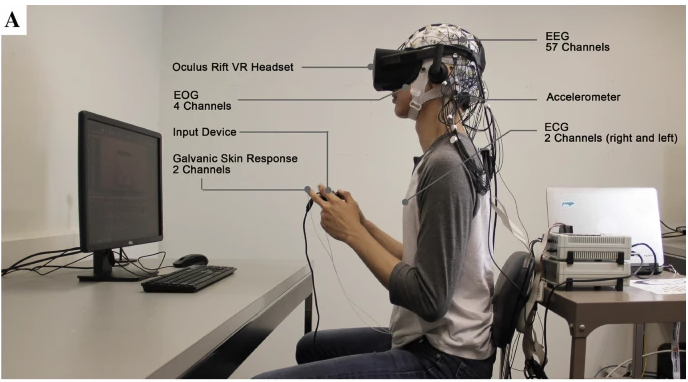

Promoting Human-Centered Architectural Design through Biometric Data and Virtual Response Testing

Principal Investigator: Saleh Kalantari

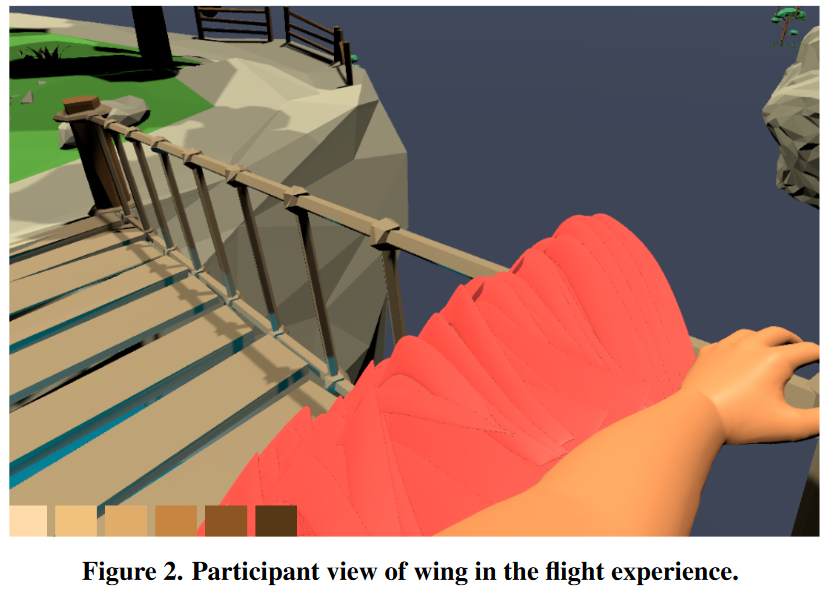

This project uses virtual reality and biophysical measurement to evaluate people’s reactions to innovative built environments before they’re constructed. The team is capturing measures of stress, anxiety, and other aspects of well-being, and creating a new interface to evaluate human-experience factors during the design review process.

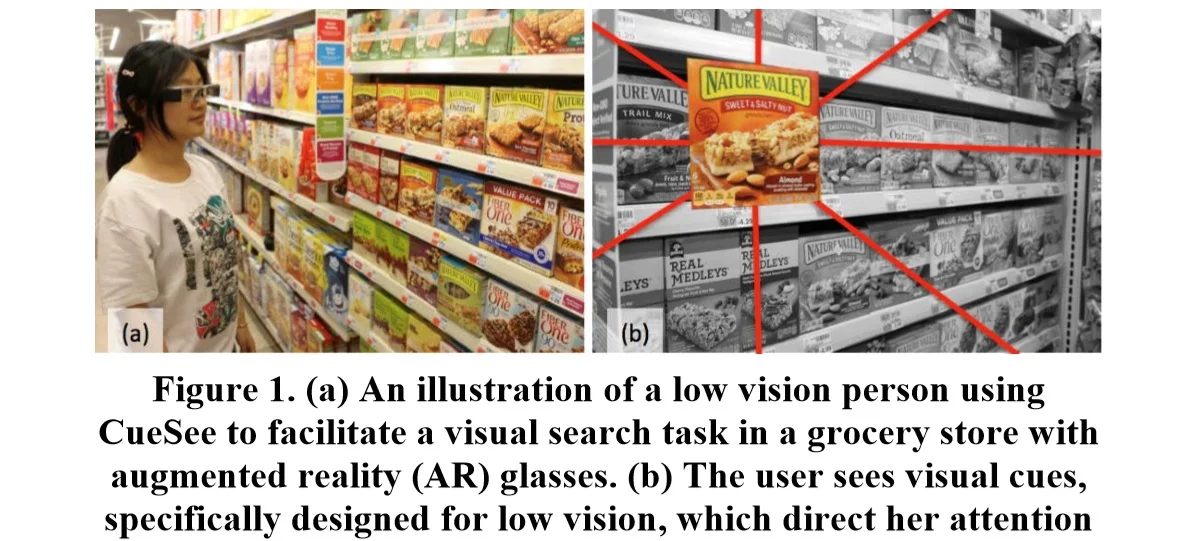

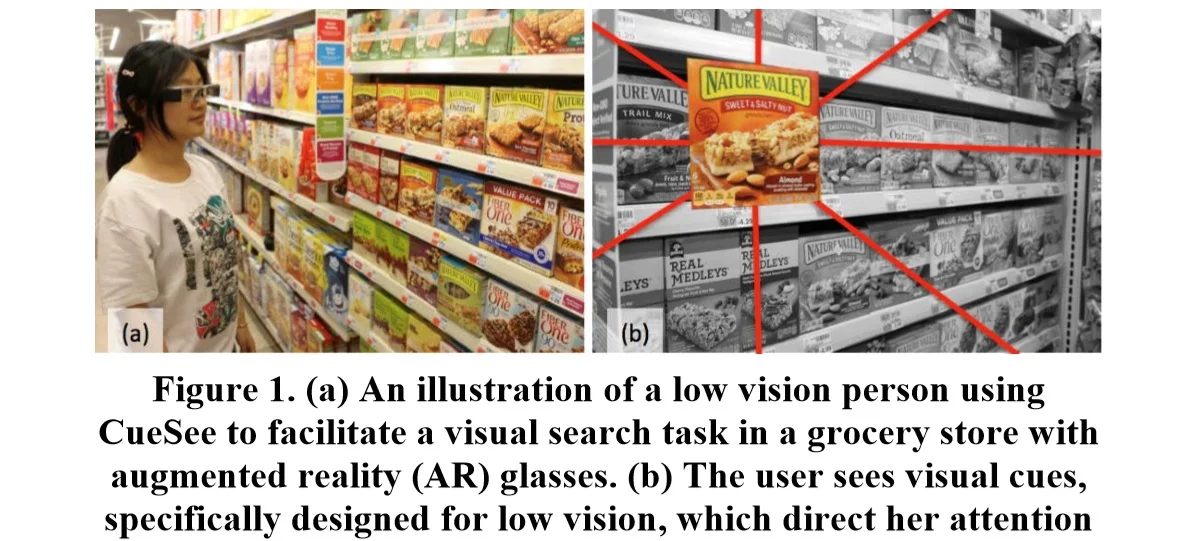

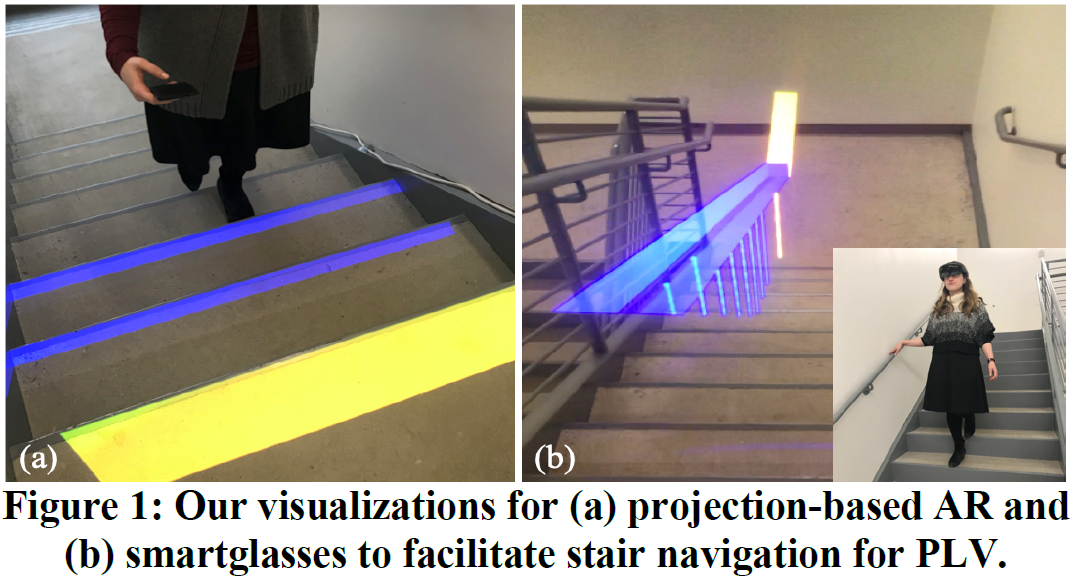

CueSee: Exploring Visual Cues for People with Low Vision to Facilitate a Visual Search Task

Yuhang Zhao, Sarit Szpiro, Jonathan Knighten, Shiri Azenkot

ACM Conference on Pervasive and Ubiquitous Computing (UBICOMP), Heidelberg, Germany, September 2016

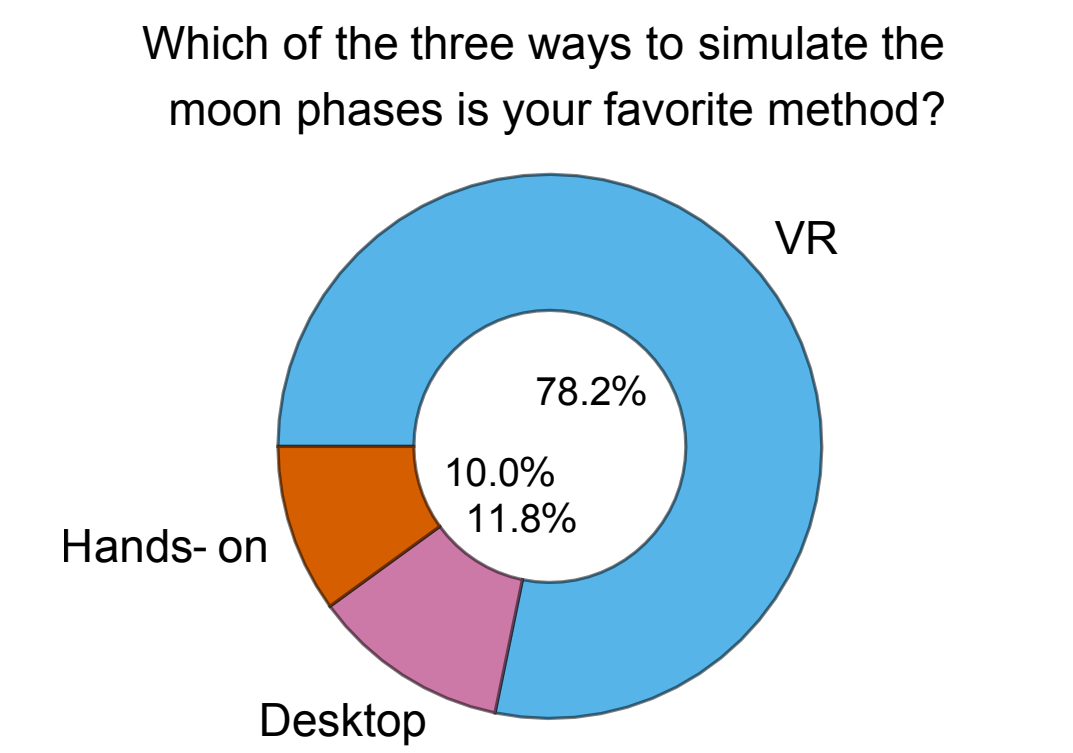

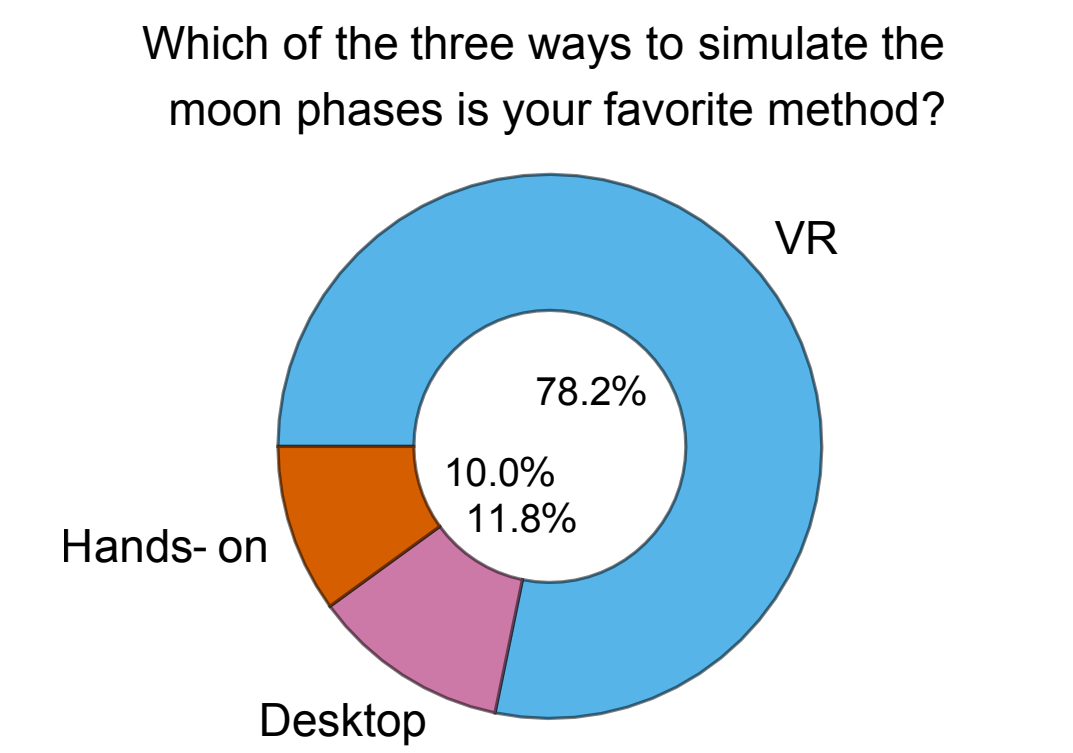

Virtual Reality as a Teaching Tool for Moon Phases and Beyond

Jack H Madden, Andrea Stevenson Won, Jon P Schuldt, Byoungdoo Kim, Swati Pandita, Yilu Sun, T J Stone, N G Holmes

Pre-published on arXiv

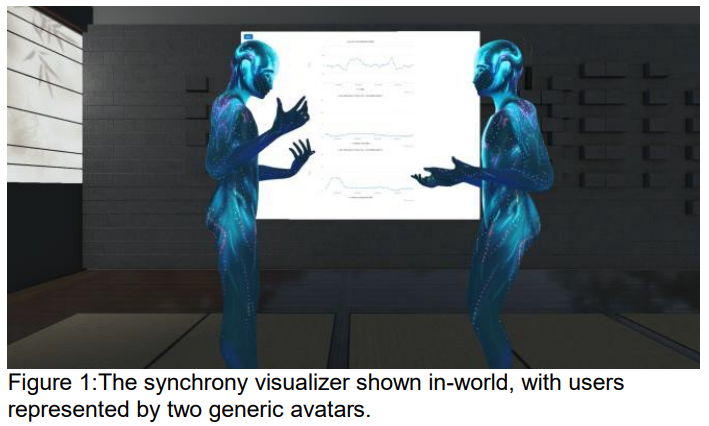

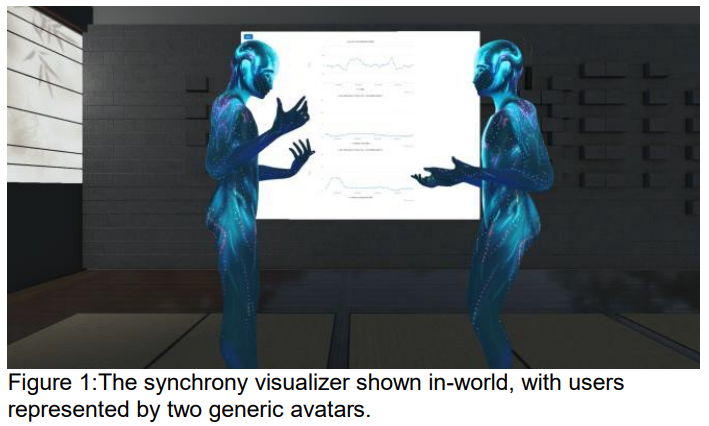

Movement Visualizer for Networked Virtual Reality Platforms

Omar Shaikh, Yilu Sun, Andrea Stevenson Won

IEEE Conference on Virtual Reality and 3D User Interfaces, Reutlingen, Germany, 2018

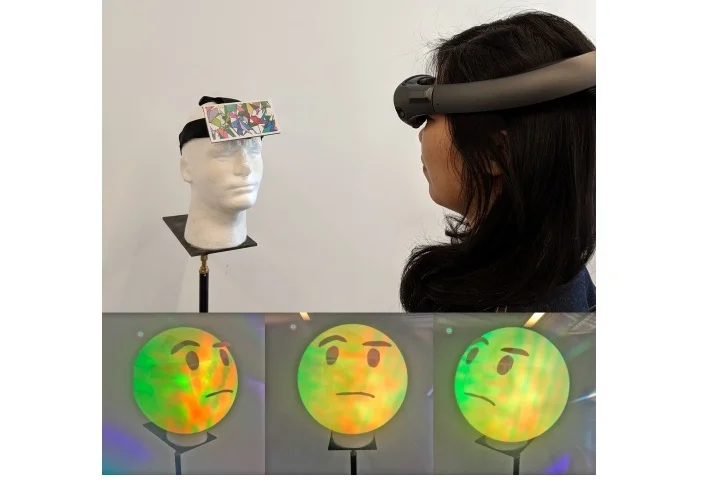

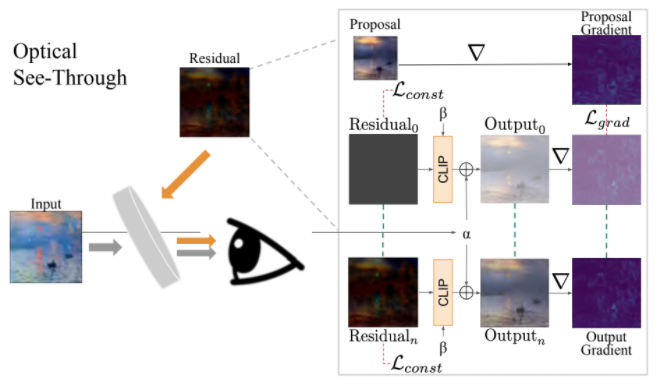

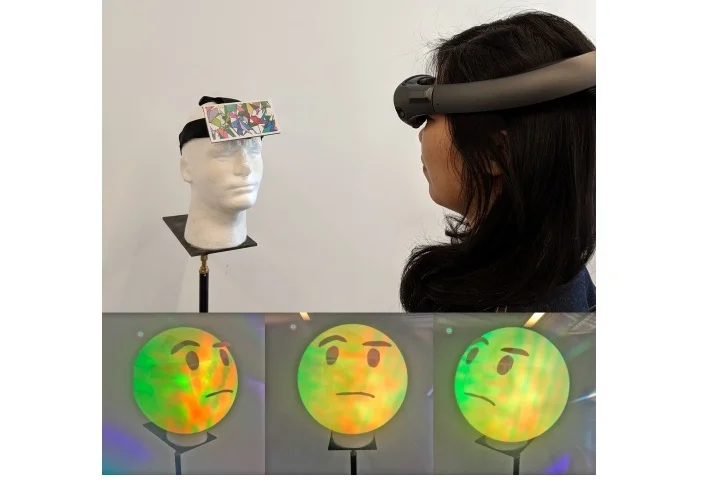

Anon-Emoji: An Optical See-Through Augmented Reality System for Reducing Appearance Bias in Social Interactions

Ran Sun, Harald Haraldsson, Yuhang Zhao, Serge Belongie

CVPR Workshop on Computer Vision for Augmented and Virtual Reality, Long Beach, CA, 2019

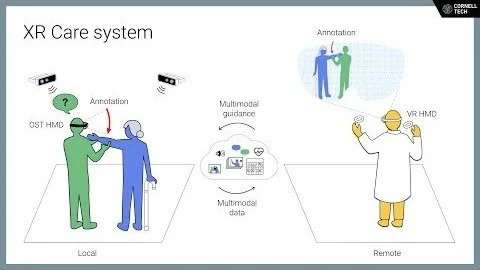

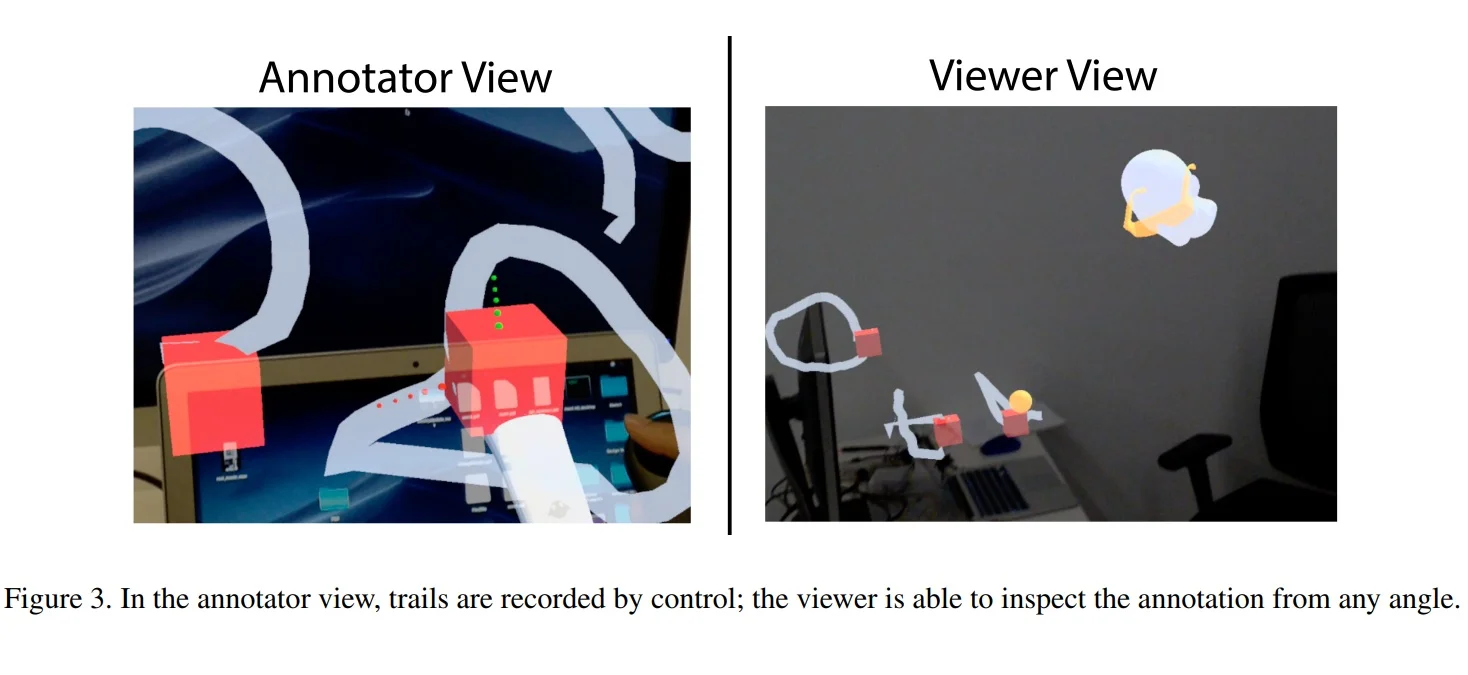

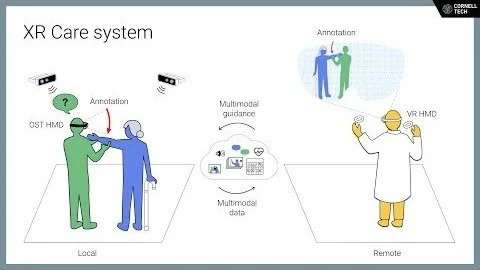

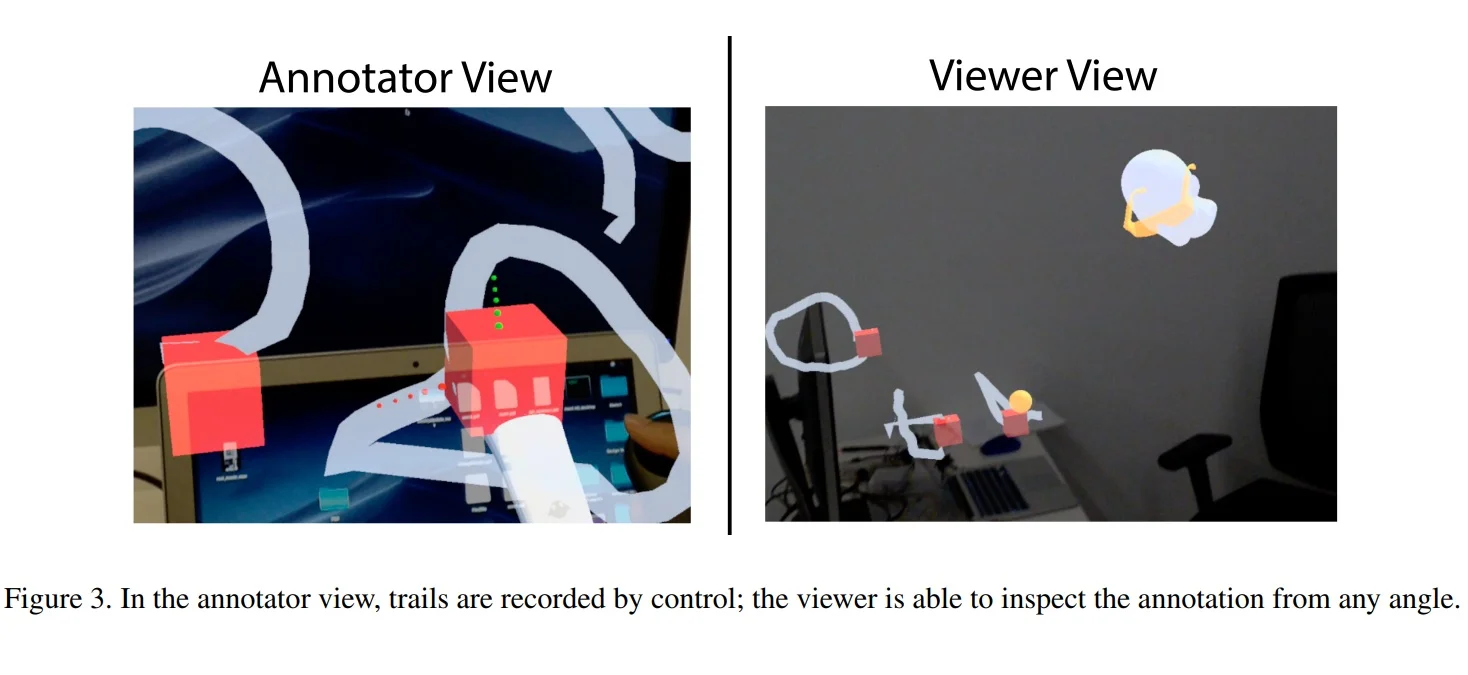

Annotate All! A Perspective Preserved Asynchronous Annotation System for Collaborative Augmented Reality

Po Yen Tseng, Harald Haraldsson, Serge Belongie

CVPR Workshop on Computer Vision for Augmented and Virtual Reality, Long Beach, CA, 2019

Markit and Talkit: A Low-Barrier Toolkit to Augment 3D Printed Models with Audio Annotations

Lei Shi, Yuhang Zhao, Shiri Azenkot

ACM Symposium on User Interface Software and Technology (UIST), 2017

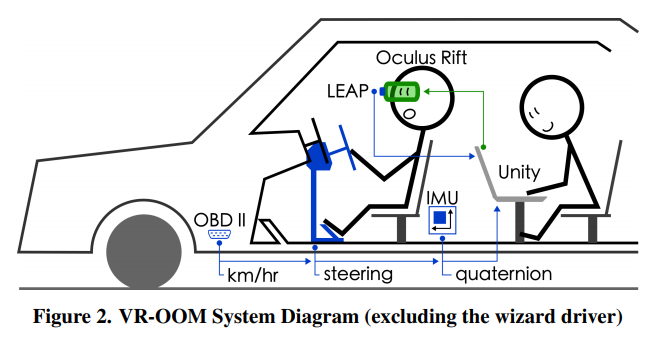

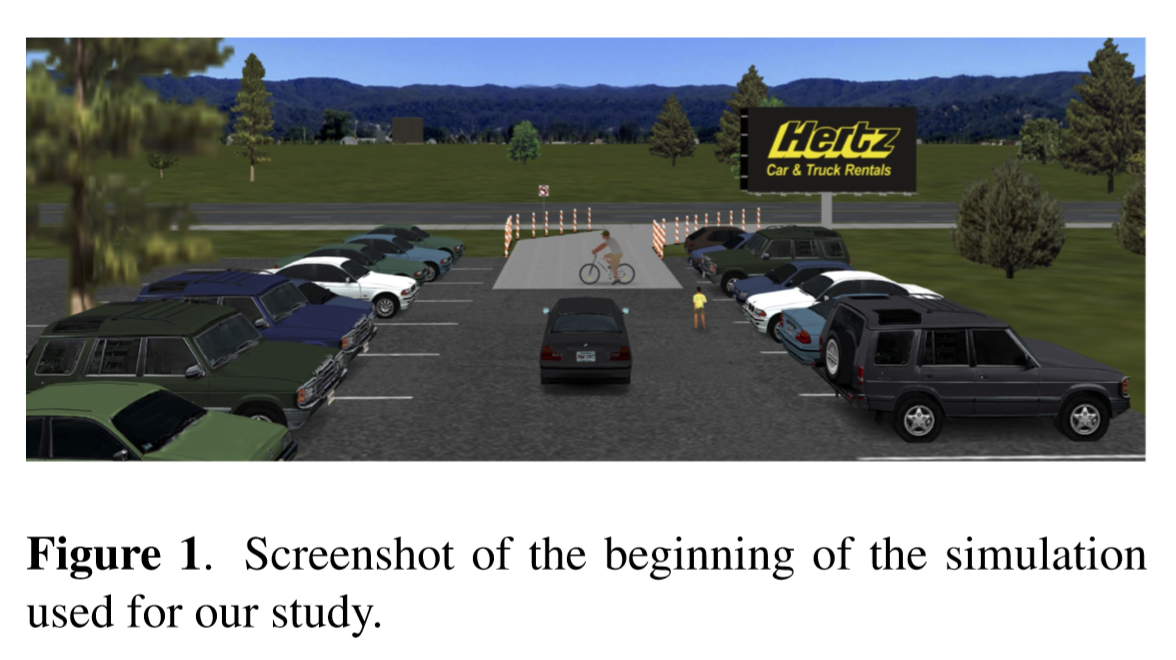

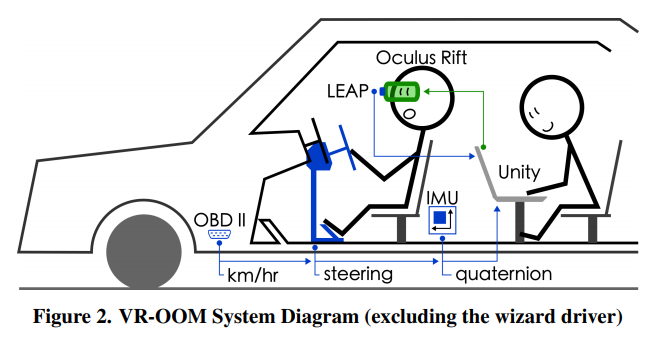

VR-OOM: Virtual Reality On-Road Driving Simulation

David Goedicke, Jamy Li, Vanessa Evers, Wendy Ju

ACM Conference on Computer-Human Interaction, Montreal, Canada, April 2018

Driving with the Fishes: Towards Calming and Mindful Virtual Reality Experiences for the Car

Pablo E Paredes, Stephanie Balters, Kyle Qian, Elizabeth Murnane, Francisco Ordonez, Wendy Ju, James A Landay

Proceedings of the ACM on Interactive, Mobile, Wearable, and Ubiquitous Technologies 2(4), 2018

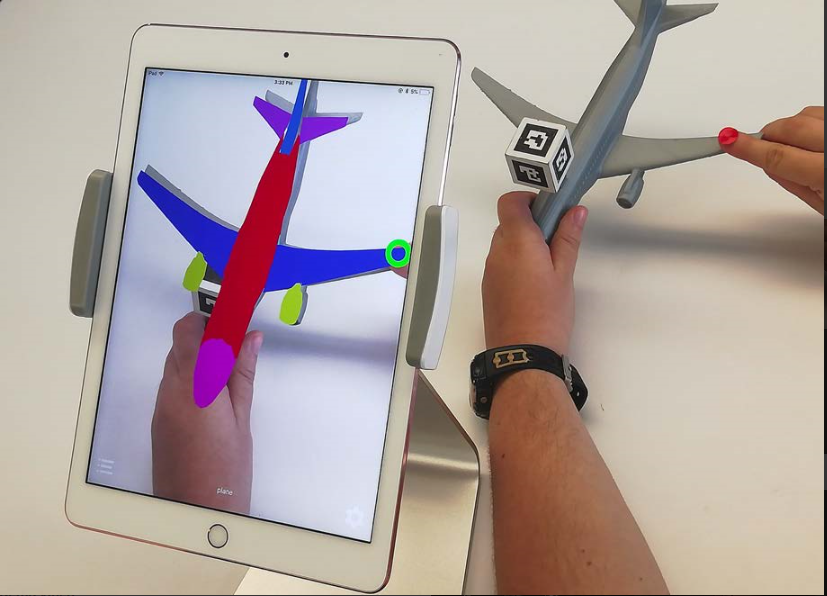

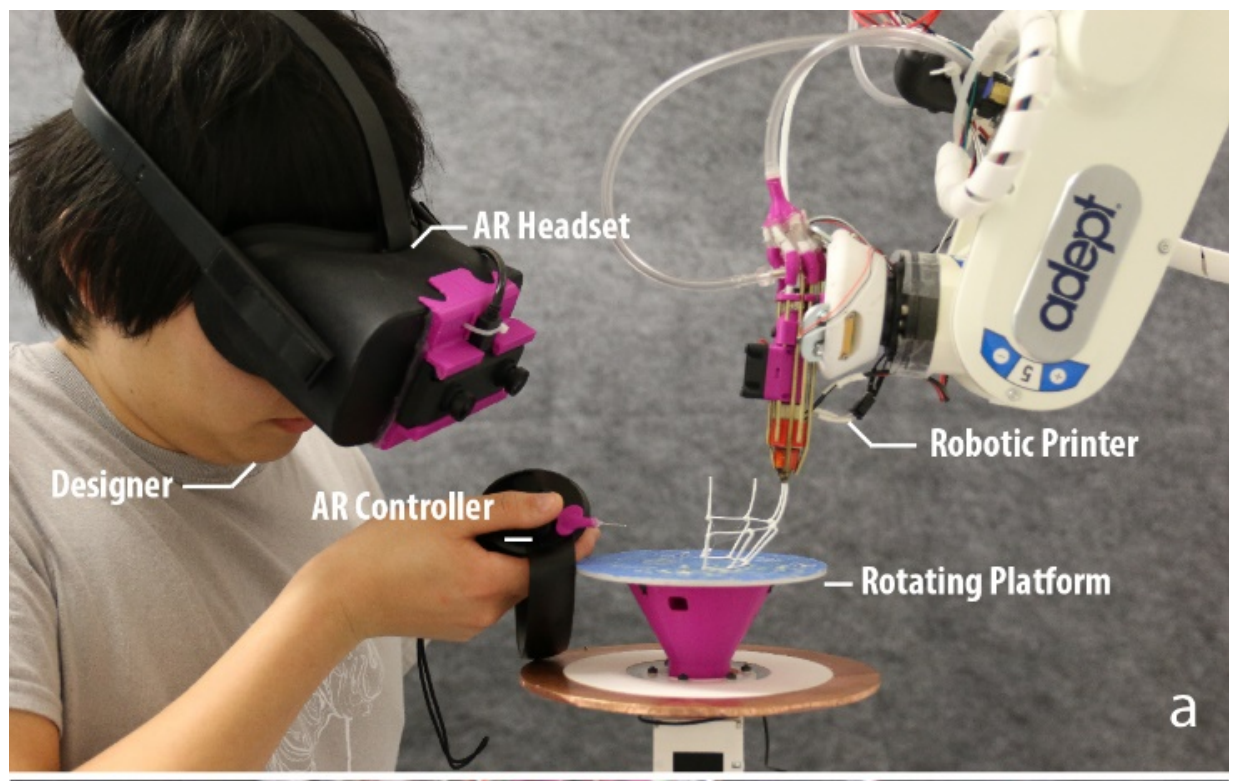

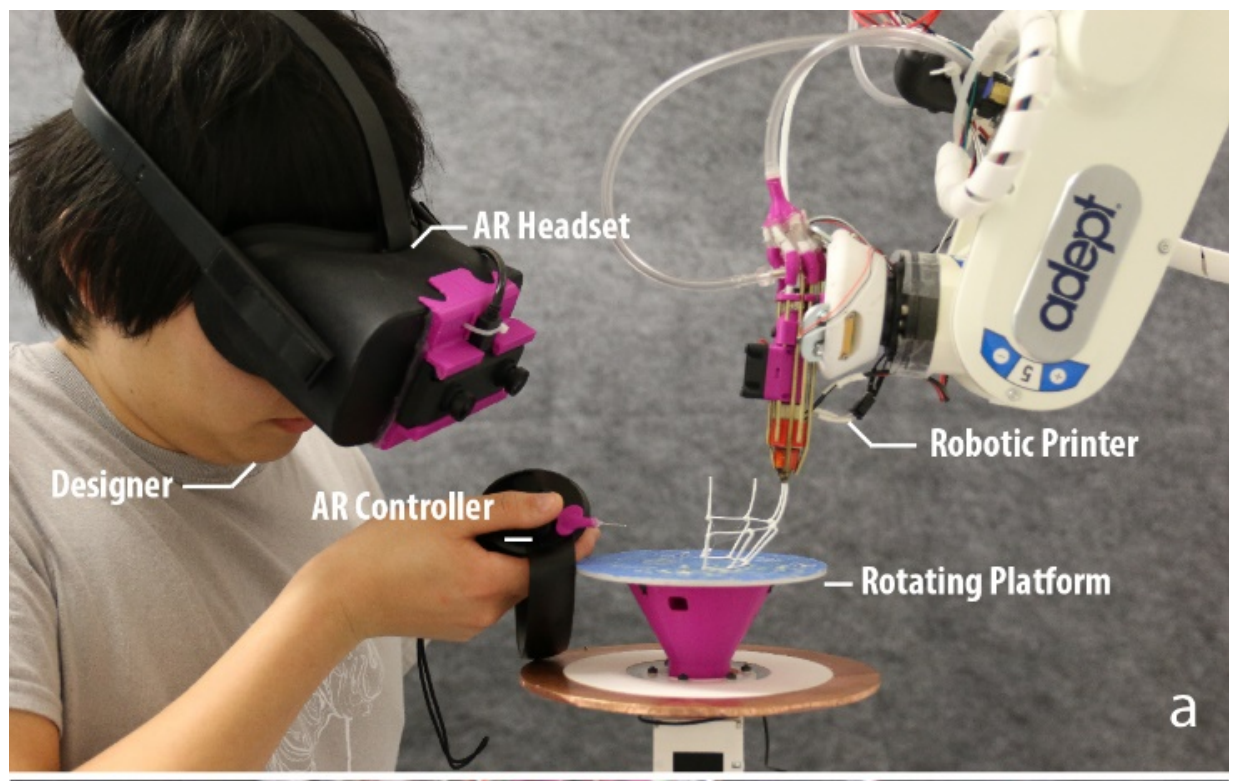

RoMA: Interactive Fabrication with Augmented Reality and a Robotic 3D Printer

Huaishu Peng, Jimmy Briggs, Cheng-Yao Wang, Kevin Guo, Joseph Kider, Stefanie Mueller, Patrick Baudisch, Francois Guimbretiere

ACM Conference on Computer-Human Interaction (CHI), Montreal, Canada, 2018

Virtual Reality Exposure versus Prolonged Exposure for PTSD: Which Treatment for Whom?

Aaron M Norr, Derek J Smolenski, Andrea C Katz, Albert A Rizzo, Barbara O Rothbaum, JoAnn Difede, Patricia Koenen-Woods

Depression and Anxiety 35(6), 2018

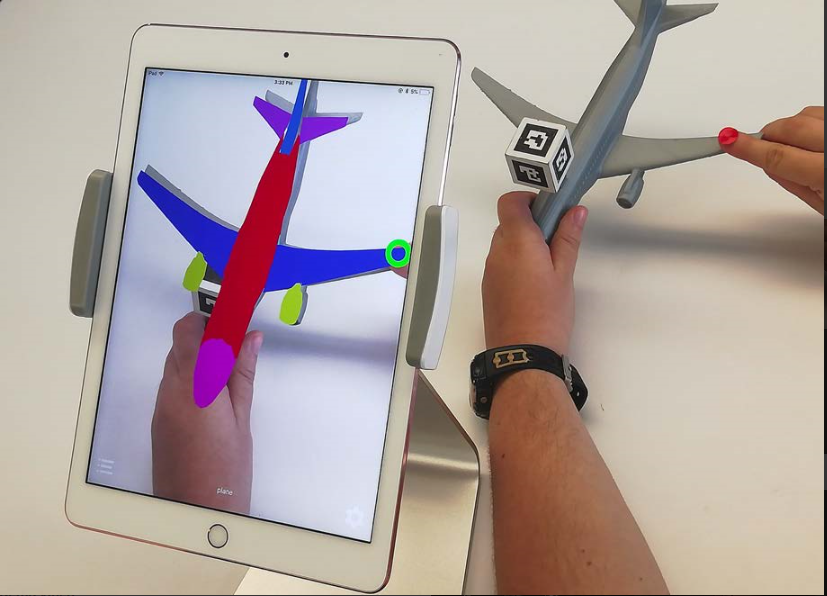

AR For Equine Radiography Training

Allison Buck and Julie Powell

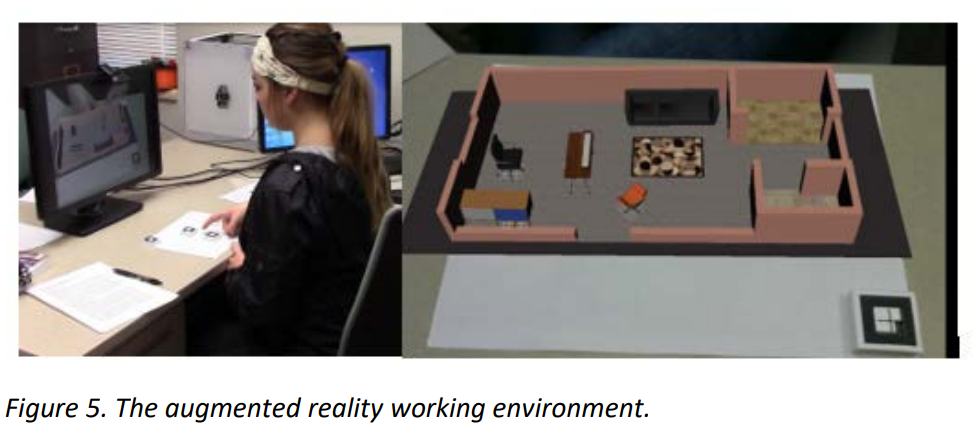

Developed at the Cornell College of Veterinary Medicine, this augmented reality (AR) application allowed students to practice a notoriously difficult skill: visualizing and capturing radiographic views of the equine carpus. Presented with a case-based scenario, students worked in small groups to evaluate the equine patient’s history and perform virtual diagnostic tests. During one of their virtual tests, the AR application prompted students to position their radiographic equipment (the iPad) relative to their patient’s forelimb to capture several images necessary for diagnosis. Within the application, students controlled the transparency of the forelimb to expose its underlying bony anatomy, allowing further review of the bones before positioning to take the radiograph. Once students were satisfied with the views they had captured using the app’s built-in screen-capture feature, they uploaded their results to the learning management system (LMS) to be graded by the instructor.

A Faust Based Driving Simulator Sound Synthesis Engine

Romain Michon, Mishel Johns, Sile O’Modhrain, Nick Gang, Nikhil Gowda, David Sirkin, Chris Chafe, Matthew James Wright, Wendy Ju

Proceedings of the 13th Sound and Music Computing Conference, Hamburg, Germany, 2016.

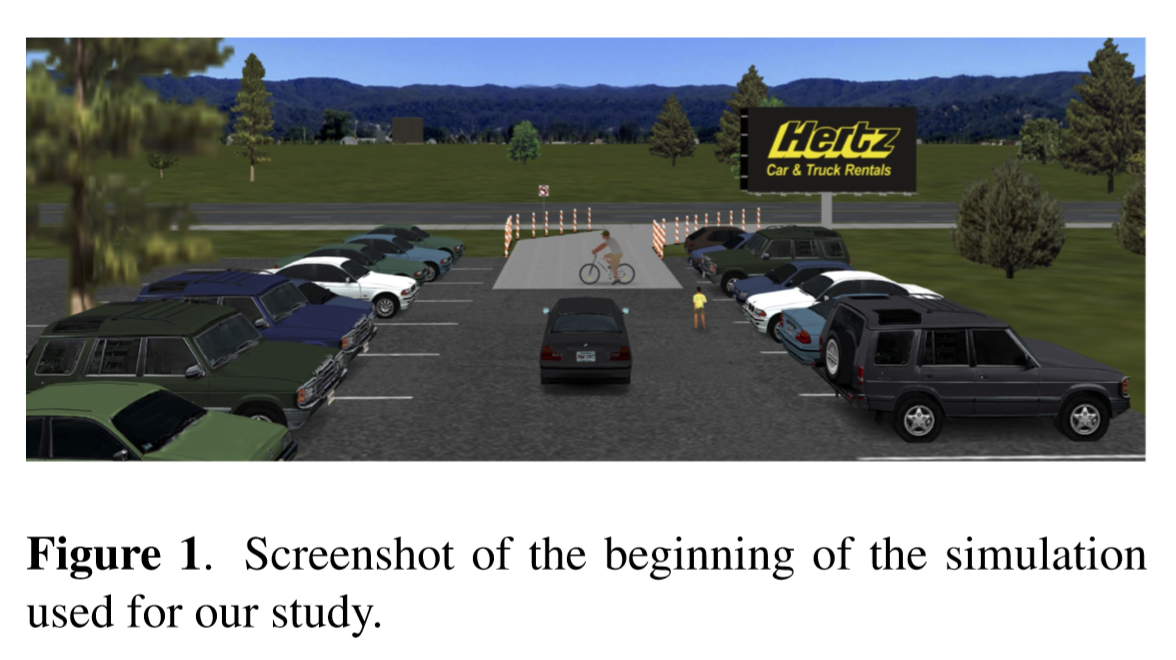

How People Experience Autonomous Intersections: Taking a First-Person Perspective

Sven Krome, David Goedicke, Thomas J Matarazzo, Zimeng Zhu, Zhenwei Zhang, J D Zamfirescu-Pereira, Wendy Ju

Proceedings of AutomotiveUI 2019

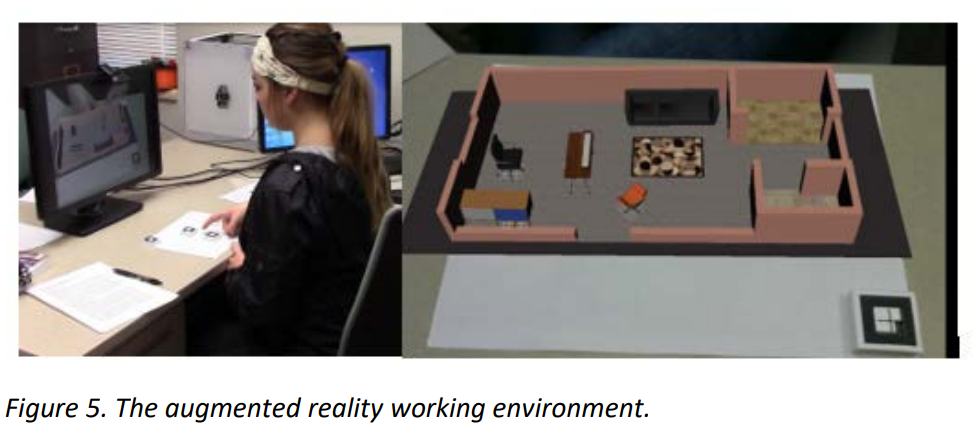

Augmented Reality, Virtual Reality, and their Effect on Learning Style in the Creative Design Process

Store Design: Visual Complexity and Consumer Responses

Ju Yeun Jang, Eunsoo Baek, So-Yeon Yoon, and Ho Jung Choo

International Journal of Design, 12(2), pp 105-118.

As in-store experience becomes increasingly important, retailers strive to create unique and memorable environments. One trend toward this goal is to emphasize decorative elements, which increases store complexity. However, how such elevated store complexity contributes to consumer response has yet to be explored. This study investigates the effect of visual complexity in a fashion store on affective and behavioral responses using self-report and psychophysiological measures. The findings provide a novel understanding of the effects of a store’s visual complexity on consumers.

Effects of Detail and Navigability on Size Perception in Virtual Environments

Reza Sadeghi and So-Yeon Yoon

The International Journal of Architectonic, Spatial, and Environmental Design 10(3), pp 17-26.

Nonverbal Synchrony in Virtual Reality

Again, Together: Socially Reliving Virtual Reality Experiences When Separated

Cheng Yao Wang, Mose Sakashita, Upol Ehsan, Jingjin Li, Andrea Stevenson Won

Proceedings of CHI 2020

Curating a Decolonial XR FutureMuseum: An Exercise in Speculative Architecture

Tao Leigh Goffe

Extending reality and using mixed media, this project meditates on the uses of virtual reality, augmented reality, and gaming to code and theorize a virtual museum in the future, in the year 2350. In development as part of the Milstein Summer Program, the project will work with students to ask: in what ways do video games offer a portal into another cosmology, another universe? In doing so, we will redefine the intersection of artifact, art, archive, and metadata as amplified by the virtual.

Ready Student One: Exploring the Predictors of Student Learning in Virtual Reality

Jack Madden, Swati Pandita, Jon P. Schuldt, Byoungdoo Kim, Andrea Stevenson Won, N. G. Holmes

PLoS ONE 15(3)

Supporting Self-Injury Recovery: The Potential for Virtual Reality Intervention

Kaylee Payne Kruzan, Janis Whitlock, Natalya N. Bazarova, Katherine D. Miller, Julia Chapman, Andrea Stevenson Won

Proceedings of CHI 2020

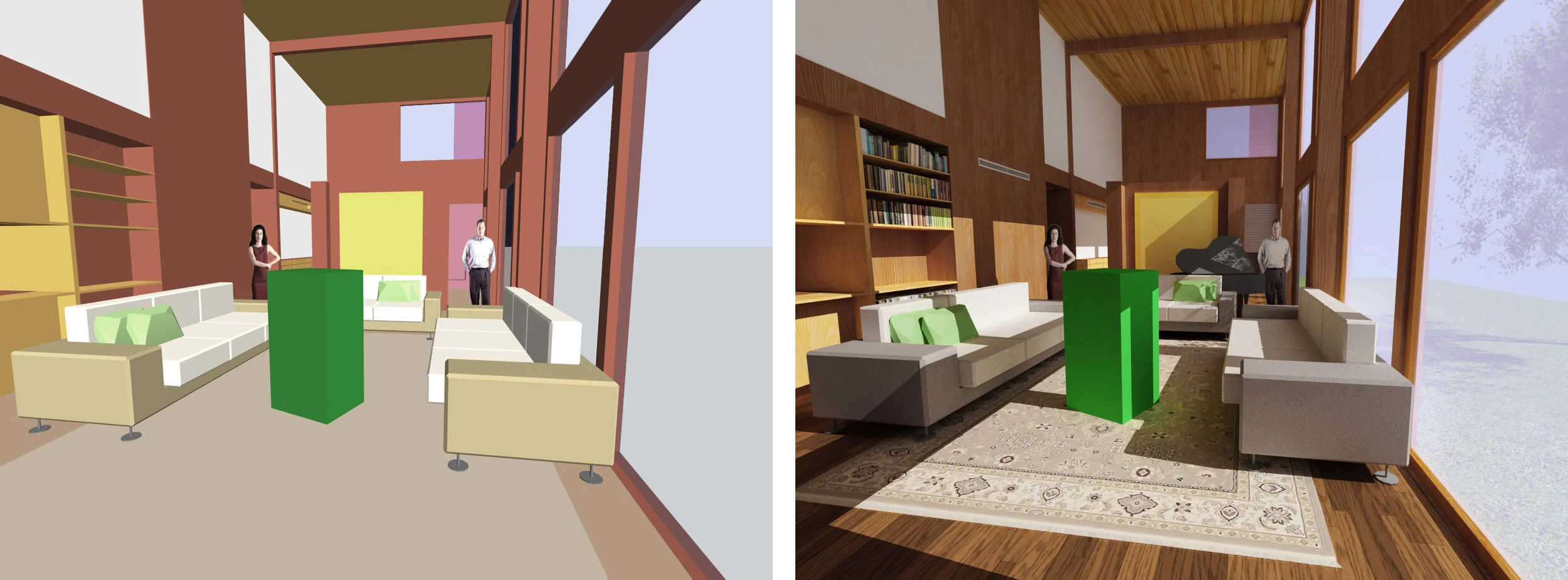

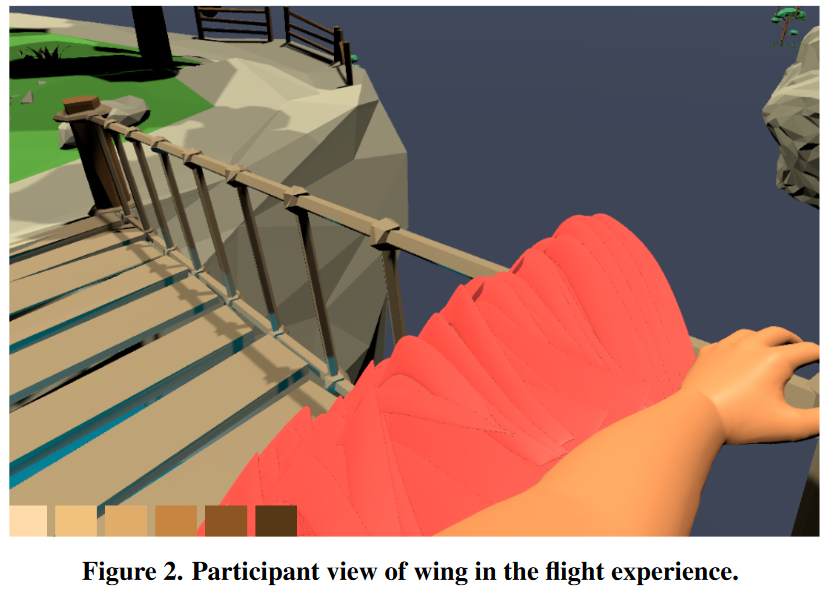

Virtual Environments for Design Research: Lessons Learned From Use of Fully Immersive Virtual Reality in Interior Design Research

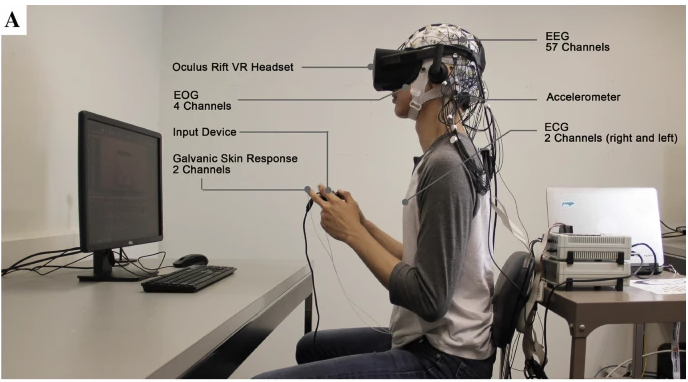

Comparing physiological responses during cognitive tests in virtual environments vs. in identical real-world environments

Saleh Kalantari, James D. Rounds, Julia Kan, Vidushi Tripathi, Jesus G. Cruz-Garza

Scientific Reports, 2021

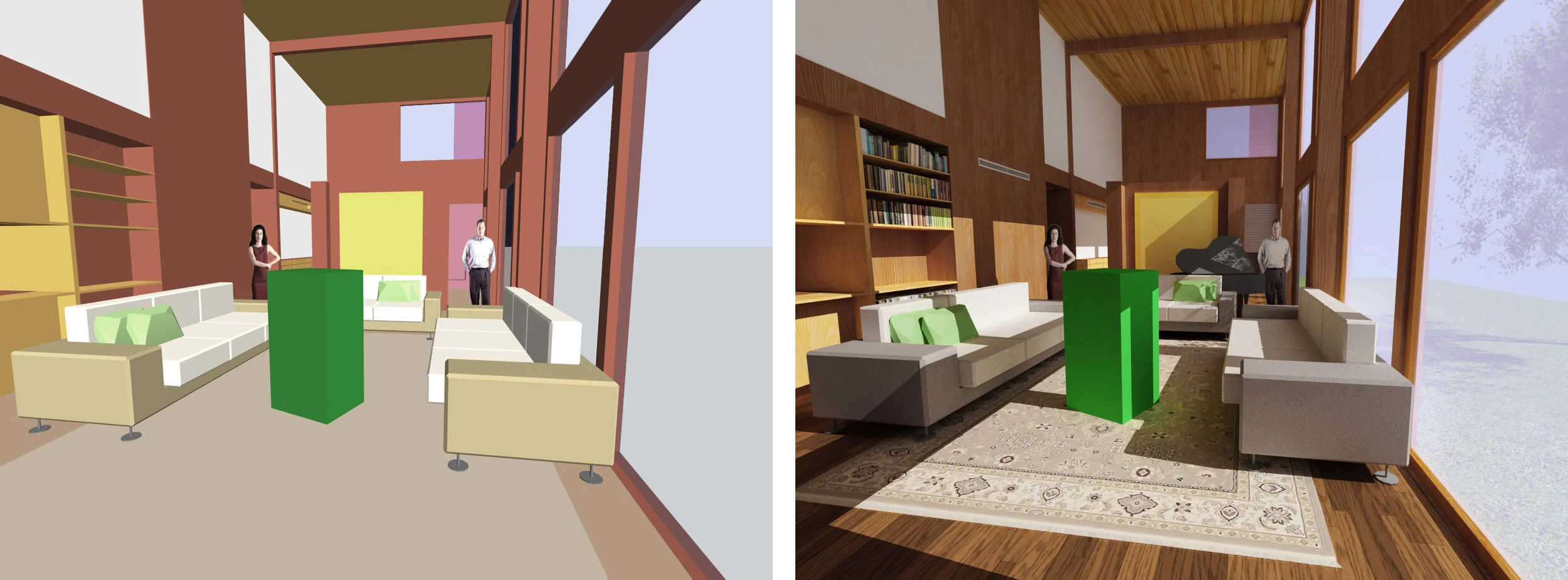

Immersive virtual environments (VEs) are increasingly used to evaluate human responses to design variables. VEs provide a tremendous capacity to isolate and readily adjust specific features of an architectural or product design. They also allow researchers to safely and effectively measure performance factors and physiological responses. However, the success of this form of design-testing depends on the generalizability of response measurements between VEs and real-world contexts.

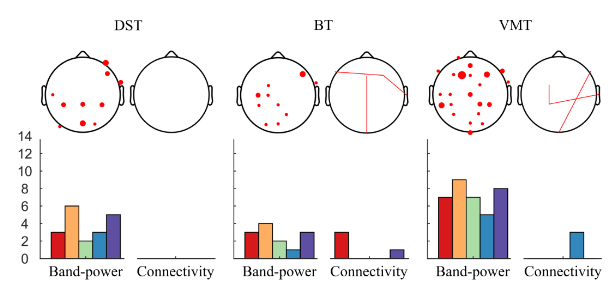

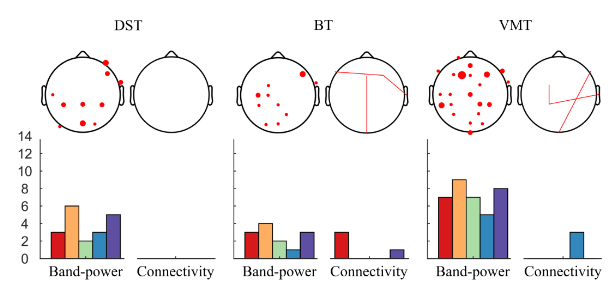

EEG-based Investigation of the Impact of Classroom Design on Cognitive Performance of Students

Jesus G. Cruz-Garza, Michael Darfler, James D. Rounds, Elita Gao, Saleh Kalantari

ArXiv Pre-Print, 2021

This study investigated the neural dynamics associated with short-term exposure to virtual classroom designs that differed in window placement and room dimensions. Participants engaged in five brief cognitive tasks in each design condition: the Stroop Test, the Digit Span Test, the Benton Test, a Visual Memory Test, and an Arithmetic Test. Performance on the cognitive tests and electroencephalogram (EEG) data were analyzed by contrasting the classroom design conditions. The cognitive-test-performance results showed no significant differences related to the architectural design features studied. We computed frequency band-power and connectivity EEG features to identify neural patterns associated with environmental conditions. A leave-one-out machine learning classification scheme was implemented to assess the robustness of the EEG features, with the classification accuracy of the trained model repeatedly evaluated against an unseen participant's data. The classification results located consistent differences in the EEG features across participants in the different classroom design conditions, with a predictive power that was significantly higher than that of a baseline classifier trained on scrambled data. These findings were most robust during the Visual Memory Test, and were not found during the Stroop Test and the Arithmetic Test. The most discriminative EEG features were observed in bilateral occipital, parietal, and frontal regions in the theta and alpha frequency bands.
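The leave-one-participant-out evaluation and scrambled-data baseline described in this abstract can be illustrated with a small sketch. This is not the authors' pipeline: the data are synthetic, the two features are made up rather than real EEG band-power values, and a nearest-centroid classifier stands in for whatever model the study used. All function names here are hypothetical.

```python
import random


def fit_centroids(train_X, train_y):
    """Compute the mean feature vector of each class (stand-in classifier)."""
    centroids = {}
    for label in sorted(set(train_y)):
        rows = [row for row, lab in zip(train_X, train_y) if lab == label]
        centroids[label] = [sum(col) / len(col) for col in zip(*rows)]
    return centroids


def predict(centroids, x):
    """Return the label whose centroid is closest to x (squared distance)."""
    def sq_dist(c):
        return sum((ci - xi) ** 2 for ci, xi in zip(c, x))
    return min(centroids, key=lambda label: sq_dist(centroids[label]))


def leave_one_participant_out_accuracy(X, y, participants):
    """Hold out each participant in turn, train on the others, pool accuracy."""
    correct = 0
    for held_out in sorted(set(participants)):
        train = [i for i, p in enumerate(participants) if p != held_out]
        test = [i for i, p in enumerate(participants) if p == held_out]
        centroids = fit_centroids([X[i] for i in train], [y[i] for i in train])
        correct += sum(predict(centroids, X[i]) == y[i] for i in test)
    return correct / len(X)


def scrambled_baseline(X, y, participants, n_repeats=50, seed=0):
    """Average accuracy when condition labels are randomly shuffled."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_repeats):
        shuffled = list(y)
        rng.shuffle(shuffled)
        scores.append(leave_one_participant_out_accuracy(X, shuffled, participants))
    return sum(scores) / len(scores)


def make_synthetic_data(n_participants=8, trials_per_condition=10, seed=1):
    """Synthetic trials: the design condition shifts the first feature only."""
    rng = random.Random(seed)
    X, y, participants = [], [], []
    for p in range(n_participants):
        for condition in (0, 1):
            for _ in range(trials_per_condition):
                X.append([rng.gauss(3.0 * condition, 1.0), rng.gauss(0.0, 1.0)])
                y.append(condition)
                participants.append(p)
    return X, y, participants


X, y, participants = make_synthetic_data()
real_acc = leave_one_participant_out_accuracy(X, y, participants)
baseline_acc = scrambled_baseline(X, y, participants)
print(f"leave-one-participant-out accuracy: {real_acc:.2f}")
print(f"scrambled-label baseline accuracy:  {baseline_acc:.2f}")
```

Holding out every trial from one participant at a time means the model is always scored on a person it has never seen, which is what makes the comparison against the scrambled-label baseline a test of generalization across participants rather than within them.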

Evaluating Wayfinding Designs in Healthcare Settings through EEG Data and Virtual Response Testing

Saleh Kalantari, Vidushi Tripathi, James D. Rounds, Armin Mostafavi, Robin Snell, Jesus G. Cruz-Garza

bioRxiv Preprint, 2021

Wayfinding difficulties in healthcare facilities have been shown to increase anxiety among patients and visitors and reduce staff operational efficiency. Wayfinding-oriented interior design features have proven beneficial, but the evaluation of their performance is hindered by the unique nature of healthcare facilities and the expense of testing different navigational aids. This study implemented a virtual-reality testing platform to evaluate the effects of different signage and interior hospital design conditions during navigational tasks, measured through behavioral responses and mobile EEG. The results indicated that using color to highlight destinations and increase the contrast of wayfinding information yielded significant benefits when combined with wayfinding-oriented environmental affordances.